The Twitter of News?

Liz Gannes has written an intriguing story about the new version of Digg coming soon, saying it aspires to be "The Twitter of News." This is very interesting.

Think of Twitter as "at least a dress rehearsal for the news system of the future." They gave it a diminutive name, easy to dismiss, but Twitter does something important. It makes composing and reading news easier than it's ever been.

But Twitter has been standing in the same place for a long long time. Why shouldn't Digg be able to catch up and pass them in a meaningful way? If they're motivated enough and good enough the answer is they should.

What's wrong with the tech industry that it lets Twitter stagnate so long without raising a serious challenge? Google didn't do it with Buzz or Wave. Yahoo could have done something with Flickr, but they're too disorganized. Even Facebook has failed to mount a realistic challenge to Twitter.

So why not Digg? Let's hope they have something good. Let's also hope they have innovated with their API, kept it simple, and perhaps offer developers a little more to play with than Twitter has.

It's time for some rock and roll.

Update: Zee sees it too.

I don't like wires but I do like ports

My home computers have been on a diet. I've been retiring hard drives, ones that are under 1TB in size, and replacing them with new 2TB drives. It's been more than 1-for-2 because of the efficiency of larger spaces for backups and videos. And desk-clutter has been dramatically reduced. I can now put my printer on the desk with the computers and disks, router and 24-inch Cinema display. And in the living room, there's space behind the 46-inch Sony HD-TV for more hard drives should I ever want to add them, both on the power strip and on the USB hub.

So when I read on Engadget that Apple is getting ready to ship a new Apple TV with no ports at all, I thought how horrible, unless -- perhaps they've looked at the wire-mess issue and come up with a wireless way to connect desktop devices like hard disks, printers and external monitors. But I suspect that they haven't, and they believe that the "consumer" doesn't need any local storage.

Reminds me of a story a Jamaican cab driver told as he was driving me from Montego Bay to Negril. This was a long time ago, when my Jamaican uncle was still alive and I was still a smoker. As we drove through a village, he pointed out the new cottages, and said they had been built by the Cubans. They have all the modern conveniences, running water, indoor plumbing, even electricity. But the people don't want to live in them because Cuban-built houses don't have back doors.

I asked why do they need back doors?

He laughed and said, when the police knock on the front door, it's nice to have a back door.

I've said it before and it's worth saying again. Apple is building the Disney computer network. All the streets are clean, and the entertainment too. There's no porn here, and as long as there are no ports it'll stay that way. But computers are meant to be more than Disneyland, they are meant to solve societal problems and help our species evolve. That means we must have freedom. And freedom and control are exact opposites. So I'd rather have wire-cluttered desktops and TV stations, than have Apple decide what I can and can't watch.

My top tweets in a JavaScript include

As you may know, when I find something interesting on the web, I push a link through Twitter in such a way that the number of click-throughs can be counted.

Every few minutes I build a page that ranks my 40 most recent tweets by the number of times they've been clicked on. It's kind of a crude method of determining relevance.

I've always felt this information should be displayed in an abbreviated way on scripting.com. I've now got it working. See below:

It's done using a "JavaScript include," by adding this little snip of code to a page.

<script type="text/javascript" src="http://static.scripting.com/misc/topdave.js"></script>

Nothing earth-shaking, just "nice to have."
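For the curious, here's roughly what the build step could look like. This is a sketch in Python, not the script that actually runs on scripting.com: the `build_include` name, the `clicks` field and the sample data are all made up for illustration. It ranks tweets by click count and emits a single `document.write` call, which is the kind of thing a JavaScript include can serve.

```python
import html
import json


def build_include(tweets, limit=5):
    """Return JavaScript source that writes the most-clicked tweets into a page."""
    # Rank by click count, highest first, and keep only the top few.
    ranked = sorted(tweets, key=lambda t: t["clicks"], reverse=True)[:limit]
    items = "".join(
        '<li><a href="{url}">{text}</a> ({clicks})</li>'.format(
            url=html.escape(t["url"], quote=True),
            text=html.escape(t["text"]),
            clicks=t["clicks"],
        )
        for t in ranked
    )
    markup = "<ul>" + items + "</ul>"
    # json.dumps yields a quoted, escaped string that is also a valid JavaScript literal.
    return "document.write(" + json.dumps(markup) + ");"


# Hypothetical sample data; real counts would come from whatever tracks the click-throughs.
sample = [
    {"text": "First link", "url": "http://example.com/1", "clicks": 12},
    {"text": "Second link", "url": "http://example.com/2", "clicks": 40},
    {"text": "Third link", "url": "http://example.com/3", "clicks": 7},
]
print(build_include(sample, limit=2))
```

Save the returned string to a `.js` file on a static server, rebuild it every few minutes, and any page that includes it gets the current list.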

My instant review of Twitter's new business plan

There's something deeply unsettling about Twitter's new crackdown on publishers who run ads through Twitter. Actually there are many things that are unsettling about it.

1. First, it's not at all clear what the new policy is. They've said you have to share revenue with them if you run ads through Twitter. But is it an ad if I pass through a link to a page with an ad on it?

2. They said, in a phone interview, they'll only ask for money from people who do a lot of this. What about Huffington Post? We love them, they say. But they don't say they won't ask for a cut.

3. This new policy totally contradicts everything they've been saying about developers and publishers over the years. Okay they don't have to be consistent. What will they change next?

4. Twitter is gradually encroaching on the roles of its developers, publishers, even plain old users. Where does this end? My prediction: It ends with us all being couch potatoes. Watching the Britney Spears Channel or the Barack Obama Channel or the Comcast Cares Channel, and going elsewhere for the free-for-all that Twitter used to be.

5. Very ironic that Dick Costolo is the guy implementing this. His Feedburner company could have chipped in a percent of revenue to help RSS, because without our work to create an open format for him to build on, he wouldn't have had a business. His pitch sounds like the one I would have made if we had talked before he launched Feedburner.

6. The biggest difference between an open platform and a corporate-owned platform -- he can change the rules after we've all invested. With an open platform, you know the rules when you start, and they can't be changed later.

7. Tomorrow I'm giving a talk at the NY Times about platforms for publishing. We will talk about this. Publishers cannot depend on Twitter to be a steady platform that will be fair to them. Twitter might claim the right to charge the Times to push links to its stories through Twitter because they all have ads on them. If not today, sometime down the road. The Times owns its own printing press, for good reason. It should own its own digital press as well.

My instant review of the LOST finale

Finally got to watch the LOST finale.

Of course this is a spoiler, so if you haven't seen it, please don't read.

1. I loved it.

2. I have a feeling they didn't explain any of what happened in the last six years, but who cares.

3. It makes me look at my friends differently, of course in a very nice way.

4. I look forward to seeing my father in a short while. And my uncles and grandparents. Should be pretty cool.

5. Remember, later on there is no now. But there is, right now, no time like now, so do it. Take care of your island, and when it needs saving, save it. And make friends and be happy cause it all works out in the end.

5a. Consider the possibility that this is heaven.

5b. Jack dies with a puppy at his side! OMG is that sweet.

6. A very nice way to do a TV show. Can't say too many of them take that approach. Very good job LOST. One of the nicest finales. Maybe not as clever as Six Feet Under, but definitely as feel-good as The West Wing.

7. Namaste!

Fact-checking the death of the open web

The NY Times Magazine has a short but interesting article by Virginia Heffernan entitled The Death of the Open Web. Titles like that make me cringe. Time to explain why.

What does it mean? How could you fact-check such an assertion? Was the open web ever alive? If not, how could it possibly die?

If you think it was alive, how do you tell the difference between an alive "open web" and a dead one?

People have said, curiously, that all kinds of things that never lived are now dead. I'd love to ask one of those people to say exactly what it means.

Even for things that no one disputes are alive, there are debates about when death occurs. Is it when the living being stops breathing? When its heart stops? When there's no sign of brain function? The problem with each of those tests is that there are people who would have failed such a test who come back to life, who think and speak, move and write.

But with things like the "open web" that are wholly figments of the imagination, that no one even has a good definition of, much less an idea of what it means for it to "live," how do you decide when it's dead?

Bottom-line: Such statements are not verifiable, are meaningless. You couldn't fact-check it.

Reporters take such liberties, at a time when they must defend their value as the only objective fact-gatherers in our society. They even call themselves referees. But then they make up "facts" like this, and there's never anyone to ask a reporter, like you'd ask a politician, to defend an indefensible idea.

25 years of AOL

I'm headed south today on the only high-speed train in the US, to suburban Virginia to party with the founders of AOL. They have something to celebrate, the company they started 25 years ago is still here.

I was invited as one of the friends of the company, which is quite an honor. The badge I'll wear will be from Living Videotext, the company I started in the same timeframe. It is long-gone, though I'm proud to say that many of its ideas survive.

And it's cool that the northeast is so compact that you can make a ground trip between major cities in two or three hours and return the next day. Very different from the west coast.

Making stories beautiful, day 2

I'm doing more light work on the Scripting News story template.

The goal -- to make it look better on an iPad.

I use my iPad to read. Apple has also been taking some hits from Google lately, and amazingly (to me) I'm starting to feel some sympathy for them. I wish tech companies would realize that only a small minority of users like the kind of bashing they do. Pretty sure that most of us would prefer to leave the zealotry out of it. It might be like American politics, with the fringe elements, "the base," getting lobbied by incendiary pitches, while the majority who are independent switch from product to product, or maybe use more than one. Reporters like bashing too, makes their jobs easier.

That was something we learned in the old days on the Mac, in the wars between Quark and Aldus. Most professional graphics people used both products. Each had their strengths.

Similarly, I will surely get a Google tablet when it comes out. I'm so interested in this area, I have to be among the first to know what it's like. But the bluster seems so incredibly off-topic. These are just computers, they're not religious causes. At least not to me.

Anyway -- I'm mostly writing this story because I need something to test against the new style sheet.

PS: Sometimes it seems there are only two Silicon Valley tech companies: The one formed from the ashes of Sun and Netscape (which is today's Google), and Apple.

PPS: I'm running the new Android release, 2.2, on my Nexus One. I'll be looking for new features on the train trip to DC later today.

Finales over the years

Tomorrow night is the series finale of Lost.

Warning: Spoilers. Don't read if you're not caught up.

I've watched the show for all six years. My interest has ebbed and flowed. I don't think it's that great a show, nothing like The Wire or even The Sopranos or Six Feet Under, all of which I loved. But I'm faithful, and I want to know how it all comes out. In that sense this is the show that, to a degree that none of the others come close to, depends totally on how the whole thing is resolved.

Lost has (it seems) been deliberately trying to lose us, its audience -- in kind of a clever joke with an implicit promise that the end will tie it all together. Or maybe most of it. As others have said, it's hard to imagine that, in one final 2.5 hour episode, it'll all come together. There will have to be some loose ends. Or so we think.

As the final season has been moving along, they very casually without any fanfare have been resolving questions. For example, we've been wondering if the boy in the jungle is Aaron, Claire's lost son. Nope -- it's young Jacob. And who will take over for Jacob? With no fanfare, again -- it's Jack. (Should have known -- Jack and Jacob -- almost the same name.)

People think the two Koreans are dead, but I saw Jin walking into a hospital room in the flash-sideways after his supposed death in the other track. And what about the two tracks? What the frack is going on there?

Probably the only series that built up more totally to its end was the reimagining of Battlestar Galactica. A lot of people weren't satisfied with that ending, but I was. (No gratuitous spoilers.)

The best finales? Well, number two was, imho, The West Wing. In the final episode of the final season, we see a change of power. Matt Santos is inaugurated as the new President. We don't get to go with him on his adventure in power and leadership, but we know he's going to be a great President. Josh was right, of course. Instead we travel back to New Hampshire on Air Force One with Jed Bartlet. He opens a present from Mallory, the daughter of his best friend Leo who died (of which Jed says "I'm not the kind of person who has best friends.") It's the napkin that Leo wrote Bartlet for America on when he visited Jed to convince him to run, all those years ago, at the start of the adventure. At this point the tears, which were just starting to form and then roll, begin to gush and we sob with the great sense of closure we're getting. The wife, the former First Lady, asks the now ex-President what he's thinking about. "Tomorrow." The camera goes out the window and scans the horizon. Sobbing and tears and a wonderful feeling. Credits roll. That's what I call closure!

But the best finale of all time (caveat: I wasn't a fan of Newhart or Seinfeld) was Six Feet Under's. Recall that every episode began with a death that we witness. Funny or pointless, or meaningful, personal or completely anonymous. The show explores all kinds of deaths, and how we deal with them. And there's the cast of people who work at the funeral home, and their families, friends and lovers. There's even a couple of ghosts! It's beautifully made, and there was no expectation of a great finale, no need for one, not like Lost or Battlestar. But they gave us one anyway. If you haven't, go watch the whole series, and then sit down and watch the final episode and be prepared to be in awe of the creative genius and how wonderfully they pulled it off.

New story template

There's a new template for scripting.com stories.

The goal of the template is to produce a page that looks good on a smartphone web browser, in a desktop web browser, and on an iPad.

Not totally sure what I'm doing here.

But this story is rendered in the new template, so let me know if it works wherever you might be reading it. And if you have an idea how to make it look better, let me know (the more specific the better).

Live-blogging from the Thursday meeting

Jeremy Zilar is showing us how to find great new NY blogs.

http://flatbushgardener.blogspot.com/

There are a lot of opera blogs in NY.

The more passive the subject the more active the community.

Flatbush Gardener has a fantastic blogroll.

Fucked in Park Slope (She got banned from the Park Slope Parents mail list.)

Bitchcakes Commute

I edit blog posts just like they're documents.

Brokelyn -- living cheaply in Brooklyn

The scoop on Google TV

I wasn't at the announcement today, but I'm very interested in this area, having gotten my TV through a computer for the last four years or so. Google TV sounds interesting, and I will certainly get one. But I had to zoom in on what made it different, so I issued a challenge on Twitter and we eventually got to the core difference between the Google approach and the Apple approach.

With Apple, you can buy an Apple TV, as I did -- and found it seriously lacking, so I gave it away. The other choice, which is the one I use, is to hook up a Mac Mini to a regular big-screen TV, using a converter to translate DVI to HDMI, and using an optical cable to connect the sound so the digital converter in the receiver does the conversion. The theory is that the audio equipment does a better job than the computer equipment of digital-to-analog conversion.

I've been generally happy with this approach, but recently I switched to an old PowerPC tower Mac that I wasn't using. It has more horsepower than the Mini, and you need it if you want to do stuff (like browsing, emailing and tweeting) while watching a digital signal through an El Gato converter.

Okay so how does the Google device compare?

It's going to be built into the TV so it's one less box and one less set of cables. It should be cheaper. And the user interface will probably be slightly simpler. All of which are good reasons to do the product, and good reasons to get one. I hate the wire clutter that comes with using computers and am actively doing things to reduce the clutter.

Will it be Android or Chrome OS? Haven't gotten that far yet -- if you have data, please post comments or links.

What I'm really waiting for is Canon to integrate Android and wifi with a nice camera. The camera on my Droid is pretty nice, but Canons take better pictures. I'd also like a very simple programming interface so I can write my own software -- but there I have pretty much abandoned hope. This is Google after all, and they are the high and mighty priesthood, a prototype for the Cathedral made famous by Eric Raymond. I didn't think it was possible but they're more like Microsoft than Microsoft was. (At least Apple is honest about being proprietary and locking its users in the trunk.)

Ben Franklin would have understood the plight of Twitter clients

This is what Ben Franklin said at the signing of the Declaration of Independence.

"We must all hang together, or assuredly we shall all hang separately."

It's an important idea, one that applies not only to revolutions and revolutionaries, but to independent software developers.

This week a lot of the Twitter client developers are announcing that they now support Google Buzz. Another corporate platform that's trying to overtake Twitter.

In the unlikely event that Google manages to pass Twitter, the chance that Google will bypass the client developers, as Twitter did, is 100 percent. That's how corporate platforms work. Right now Google needs the client developers to help them try to climb over a very high wall. Later the market will demand that they ship an official client, the same call that Twitter heard.

The secret for the client guys is that instead of investing in individual platforms owned by huge corporations, they must swallow hard and invest in working with each other. None of them is going to eliminate the competition by doing a better job of sucking up to corporations that own the platforms. Their only chance of winning is if a platform emerges that isn't owned by a corporation.

In other words, a platform with no platform vendor.

You know, like the Internet.

The havoc the XML purists wrought

I had dinner last night with Jeremie Miller, the guy who bootstrapped Jabber, which became XMPP, which is now a widely-used transport mechanism. Jeremie is one of my heroes, he's not rich -- but he made a huge contribution to the richness of the Internet we now use.

He's working on a new protocol. There are a bunch of new ideas under the covers that I find interesting and puzzling, but the net-effect is to provide the decentralized message transport we all want from desktop-to-desktop without going through a corporate-owned cloud, so we can avoid being monetized as crowd-sourced user-generated content with eyeballs. This is the DIY cloud, and it's about fucking time. Imho.

Like a small number of brilliant technologists, Miller has a story to tell -- and at first it was a strange story but then it became familiar. Jeremie is running away from XML because he had such a bad experience with the ever-escalating complexity being promoted by people who are less interested in applications and more interested in theory. To the theorists, XML is an infinitely expressive language, and the processors are magic engines that extract meaning from a messy maze of incomprehensible gobbledy gook. To the pragmatists, XML is a file format, a way of providing compatibility between applications written in a diverse set of languages and environments. XML provides a way of giving everyone choice without sacrificing the ability to talk to each other and be heard. Corporations looking to lock-in users favor the former approach because they can claim to be complying with "standards" and at the same time be impenetrable to competitors.

I fought these people, and to their chagrin, won. RSS never included the gobbledy gook. You could write a rudimentary RSS parser in a few hours and despite the slime thrown at RSS by BigCo employees, keeping an RSS parser maintained is a very simple job and doesn't consume much time. Hence RSS became and is a juggernaut, with huge flows of content, not controlled by the big corporations. Not that they haven't been trying.

XMPP is a different story. The schema guys won that battle, and apparently XMPP is very complex as a result, and there aren't a huge number of implementations. I say apparently because I don't know -- I have never implemented XMPP, and have never set up a server. I wish I could. I now think I understand why I can't.

This is why I find the arguments of the JSON-only proponents either lazy or dishonest. I don't know which it is. They say that XML is too complicated, but that's wrong. Just ignore everything but elements, attributes and namespaces. That's what RSS does, and it's very simple. If you're designing protocols that are meant to be widely adopted, this is a trust issue. The central node in a centralized system, such as the one being proposed by Facebook, can easily afford to parse many dialects, without incurring much cost. We do it in our aggregators, small developers like Newsgator and Ranchero, I do it in River2 and I'm just a person. Surely the Great Facebook can do what we small fry do? So what is their angle? What are they complaining about? I don't trust would-be platform vendors who won't accommodate all possible developers, esp those who use a language that's so deeply installed as XML is.
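To show how little is needed, here's a sketch of a rudimentary parser in Python using the standard library's `xml.etree.ElementTree`. It's an illustration, not the code in River2 or any of the aggregators named above; the `parse_rss` function and the shape of the dictionary it returns are assumptions. It reads a handful of elements and one attribute, and skips over anything it doesn't recognize, namespaced extensions included.

```python
import xml.etree.ElementTree as ET


def parse_rss(xml_text):
    """Return the channel title and a list of items from an RSS 2.0 document."""
    root = ET.fromstring(xml_text)
    channel = root.find("channel")
    if channel is None:
        raise ValueError("no <channel> element found")
    items = []
    for item in channel.findall("item"):
        enclosure = item.find("enclosure")
        items.append({
            # findtext returns None when the element is absent, which RSS allows.
            "title": item.findtext("title"),
            "link": item.findtext("link"),
            "description": item.findtext("description"),
            # Attributes are plain key/value pairs on an element.
            "enclosure_url": enclosure.get("url") if enclosure is not None else None,
        })
    return {"title": channel.findtext("title"), "items": items}


# Hypothetical feed, including a namespaced element the parser never asks for.
sample = """<rss version="2.0" xmlns:dc="http://purl.org/dc/elements/1.1/">
  <channel>
    <title>Example Feed</title>
    <item>
      <title>Hello</title>
      <link>http://example.com/hello</link>
      <dc:creator>someone</dc:creator>
    </item>
  </channel>
</rss>"""
print(parse_rss(sample))
```

Elements the code never looks up are simply never read, so a feed can carry extensions without breaking a parser this small.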

In contrast, Amazon supports XML in their web services, quietly and competently. I can't imagine them saying one day "It's too much work for us to keep supporting XML so you all have to rewrite your code now if you want to keep paying us for the web services you use." If that ever happened I'd rush to my broker and short Amazon stock, aggressively, cause they would have lost their minds.

I said it to Jeremie and I'll say it to anyone who will listen. If you're proposing that other people develop on your technology, you should be very mindful to keep the path well-groomed, and don't add cliffs to the trail. We'll see that they're there and go some other way.

Hypercamp in SF this weekend

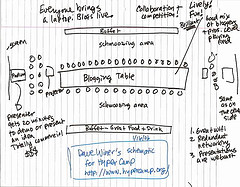

When I read the schedule for the Hacks/Hackers camp in SF this weekend I immediately wanted to be there. The goal of the weekend is to come up with some new iPad apps, but I think what's more important is the open newsroom format that they're exploring. When it's all said and done, I think it'll be far more important an innovation than any software that comes from the meeting.

http://unite.hackshackers.com/

I'm going to an event in Virginia on Sunday so I can't be in Calif this weekend, unfortunately. If you're going to be there, get ready for some fireworks. Putting a lot of writers and coders into a newsroom environment is going to cause some mind bombs to explode.

What if Skype had an API?

I started keeping Skype running after reading a TechCrunch piece explaining how screen sharing works over Skype. I needed exactly this functionality to support a few friends who are new to blogging. To be able to see what's on their screen instead of relying on their description makes a huge difference.

Using Skype makes me wonder what if they had gone in the direction that Twitter did, making it possible for status messages to scroll by in a River of News. It's amazingly close to what I consider the ideal configuration. A centralized name service with peer-to-peer updates.

But Skype was embedded in a large company, and who knows what kinds of pressure they were under. It certainly wasn't being run in an entrepreneurial way. Which led me to wonder how different things would have been if Skype had an API. A simple one that allowed you to push a message to another Skype user, and be able to catch a message flowing into the Skype client. Could have been great.
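Here's a sketch of what such an API could look like, in Python. None of this is Skype's actual interface; `NameService`, `Peer`, `push` and `on_message` are invented names, and everything runs in one process to keep it short. The point is only that the central piece does name lookup and nothing else, while messages go straight from one peer to another.

```python
class NameService:
    """Hypothetical central directory: maps a user name to that user's peer."""

    def __init__(self):
        self._peers = {}

    def register(self, name, peer):
        self._peers[name] = peer

    def lookup(self, name):
        return self._peers[name]


class Peer:
    """Hypothetical client that can push messages and catch incoming ones."""

    def __init__(self, name, directory):
        self.name = name
        self.directory = directory
        self.handlers = []
        directory.register(name, self)

    def on_message(self, handler):
        # Register a callback that is called with (sender_name, text).
        self.handlers.append(handler)

    def push(self, to_name, text):
        # Only the lookup touches the central service; delivery is direct.
        target = self.directory.lookup(to_name)
        target._receive(self.name, text)

    def _receive(self, sender, text):
        for handler in self.handlers:
            handler(sender, text)


directory = NameService()
alice = Peer("alice", directory)
bob = Peer("bob", directory)
bob.on_message(lambda sender, text: print(sender + ": " + text))
alice.push("bob", "Can you share your screen?")
```

In a real system the lookup would return a network address instead of an object, but the division of labor would be the same.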

It's a lot like rssCloud or what Dropbox is doing. Sooner or later there will be a unifying layer that handles all real-time traffic through an open API that doesn't rely on central servers any more than the Internet itself does. It's coming, you can feel it.

Update: Skype does have an API.

What makes a movie good?

Over the last few days I've seen two movies, Harry Brown and Robin Hood.

I thought Harry Brown was good, but didn't care for Robin Hood.

That got me thinking -- what is it about a movie that makes me like it, and the answer was surprisingly simple. First, the movie has to make me care about the characters. Second, they have to be true to who they are.

It's nice if something interesting happens in the movie, but not required. For example, I like Vicky Cristina Barcelona, because the characters are so interesting and lovely and so is the scenery. Movies can be like paintings. It's not the plot that matters so much as the colors and the feelings they inspire.

It also helps if I can project myself into the place of one or more of the main characters. What would it be like to be them? What would I do in their place?

If all this comes together, you stop feeling so much like you're in a theater watching a movie, and more like part of an unfolding story.

And if there is a resolution, as far as I'm concerned, it doesn't have to be a happy one. I respect movies that have a bitter or sad ending and pull it off. I also don't need a sense of closure. I like movies that leave you wondering at the end what happened. If it's annoying that's fine. I don't mind if a symphony ends in discord, as long as it makes sense. Real life stories often have unhappy outcomes, no need to tie off all the loose ends in movies, but I don't mind if they do.

I guess it all comes together in what we call suspension of disbelief, and I think it's different for everyone. That's why some people liked Robin Hood, and giggled and cheered at moments when the movie called for it. I didn't -- all the time I was aware I was in a theater, not in a story. Thinking about the time, and what I'd do later, and wanting to check my email.

I often criticize a movie for being predictable, but that's actually kind of bogus. I don't really mind if a movie is predictable. I only really care when the other things aren't working for it, when they didn't do enough with the characters to get me involved, to care one way or the other what happens to them.

People have compared Harry Brown to Gran Torino -- but I couldn't figure out whether that movie was Clint Eastwood's tribute to Dirty Harry and the Man With No Name, or a mockery. I loved his early movies because his characters were interesting, consistent and deep. It's not so much that you come to care about the Clint Eastwood tough guy -- you don't, but you understand him. I didn't understand the main character in Gran Torino. He seemed shallow and unreal. Maybe if they had Ed Asner in his Lou Grant role play the vigilante, or the old man in Up, it might have made sense to me.

Rebooting the News #52

Jay is traveling to Toronto for the fantastic Mesh10 conference, so Jeremy Zilar of the NY Times filled in as guest.

We talked about blogging at the Times and blogging in NYC.

http://mp3.morningcoffeenotes.com/reboot10May17.mp3

A teeny bit of Angry Birds was thrown in for fun.

Harry Brown

Went to see Harry Brown today, excellent movie, much better than Gran Torino.

But the same basic idea.

What if Zuck invented the web?

David Weinberger asks a thought-provoking question on his blog.

It would make an interesting debate. I don't believe it's anywhere near as simple, or black and white, as he portrays it.

I spent some time this week with Joe Hewitt who was in NY and one of the things we talked about is how stalled the web is. Joe is a brilliant young technologist, with an impressive track record. He works for Facebook.

If Joe wants to make a beautiful app he has to write for a locked-up company-owned API. There is no platform that isn't owned by a company that is as rich as what he wants.

We were making richer software than the stuff you can run in the web browser 20 years ago. The only problem was that it didn't network well. Had Apple been more like TBL, and not tried to lock up their networking software, to make it so hard to develop apps that ran on their network, there might have never been an explosion of networked creativity on the Internet -- there wouldn't have been a need for it.

There are lots of ways progress gets held back. One of them is the W3C, the organization that owns the standards of the web. It's controlled by huge companies, so that forces new companies like Facebook to do their innovating outside of the web. It forced RSS to happen outside the standards bodies. And there are pitfalls to gifting your creations to the universe, you get exploits like Feedburner. You could devote your whole career to studying the might-have-beens, and you wouldn't get any closer to knowing how it all should work.

Whether you like him or not, Zuck is a creative guy. He pushed his creativity out through the only channels we made available to him. You can't blame him for that. It's sub-optimal, it might even be wrong -- but what else could he have done?

Having learned my lessons from blogging, RSS and podcasting, and TBL's experience with HTTP and HTML, I would have done the same thing. It was naive to believe that just giving away the formats and protocols would leave me free to keep innovating. Doesn't work that way. Once a market develops, the product is taken away from the creative people and owned by the VCs and managers at the big tech companies.

I'm giving a talk at the NY Times later this month, and this is what I'm going to say to them. Don't be fooled by the hype of the tech industry, the rules aren't what they say they are. It's much more cut-throat. I give Zuckerberg a lot of credit for putting his plan out there for all to see. That's a lot more than Google or Apple has done.

Code as photo-op

There's an article in today's NY Times about a group of four NYU comp sci students who are embarking on a great adventure to create a decentralized version of Facebook, where the data is under the user's control.

This goal is right on, it's something I've written about many times, it's why the Internet is such a breath of fresh air compared to the locked trunk schemes of tech companies. That the article appeared in the Times is an indication that they share the aspiration of many users to be free of control of not only Facebook, but the whole tech industry. A worthy goal for the students, a worthy goal for the Times.

But whoa Nellie, just a minute -- nice picture -- but there isn't any code here!

The news people are always doing this. Pumping up a bunch of nice-looking young men (almost always men) to be David in an epic Boy Kills Boy battle to the death with Goliath. These beautiful young men are going to flail against the machine and emerge victorious. Or more likely be forgotten like so many who came before.

Example: A 2005 Times article by John Markoff about a new startup that aims to commercialize podcasting. Didn't happen.

It's irresponsible of the Times to run such a piece. There must be a dozen projects all over the world that are much further along than this one. It's definitely not good for users to focus so much attention on a project that doesn't exist, instead of ones that do.

To be clear, I'm on their side. I hope they're successful. But instead of setting the expectations so high, I would prefer to see them bite off a smaller piece of the pie. We could really use a great open source Twitter client, a smaller more pragmatic goal, and much more likely to be realized in the three months they've set aside to Change The World.

Last week I wrote about Coach Walsh and the 49ers and setting expectations a notch below reality. Let's step back from this and wish these young men well, and hope they can help the rest of us achieve some small independence from the tech industry. And let's hope next time a big publication like the Times writes puffery like this, that some editor points out all the pitfalls of such a project and gets them to stifle the hype, just a little.

Note: I am a visiting scholar this year at NYU, in the journalism institute. I am remarkably close to this story, but have never met the students.

Confronting the next taboo: Single

Elena Kagan is not gay, she's single.

We've decided that gay people are okay. Maybe now we'll decide that single people are too.

A related issue comes up from time to time, esp in the Village -- where people make a wide variety of lifestyle choices. Should gay people have the right to marry? Of course they should, I say. If two people want to make a commitment to each other, how can anyone else say they shouldn't? It's hypocrisy for conservatives who say the government must stay out of people's lives to then turn around and say that marriage is between a man and a woman. It's okay to believe that of course, but it's not okay to deny people rights if they don't conform to your beliefs.

Here's what I do have a problem with -- the government being in the marriage business, and the government giving tax benefits to people who are married.

I don't sign the petitions that call for amendments allowing gay marriage. It can be awkward because people assume this is because I'm against gay people. It's not so. I'm against the government having a stake in the relationships between people, or lack thereof.

So I think it's great that Elena Kagan is straight and single, and may well become a Supreme Court justice. It's about time that people who say they want diversity of representation get to stretch a little.

Lessons from the demise of Newsweek

Earlier: "What's killing the news business: A belief in corporations."

In yesterday's RBTN, we talked about the demise of Newsweek. Couldn't something more creative be done with the momentum behind the name? But the people at Newsweek could never contemplate what will rise in its place.

We tend to believe corporations solve problems but the evidence says otherwise. Corporations rise from entrepreneurship, get established, defend their turf, and give way to new corporations that rise from entrepreneurship, etc etc.

Microsoft, Apple, Oracle, IBM, DEC, Compaq, HP, Lotus, Sun, Netscape, Google, Twitter. Over the years they come and go, and they never solve problems. They die off and the next generation of tech companies solve them.

Newsweek can't hire a corporate executive to reboot Newsweek. But you could start a great blogging network around the Newsweek name, just like they're starting them around names like Tumblr, StackOverflow, Disqus.

We tend to throw out goodwill in tech, but not all ventures do. Right now outside my apartment in the West Village, they're tearing up Bleecker Street. How many times has that street been dug up and repaved since it was a path on property owned by an 18th century American named Bleecker? When they're finished there will be new fiber running under the street bringing me the news, and you my blog posts, even faster. Just as Bleecker's creation can be transitioned, so could Newsweek's. There's no need to break the tracks. But the past has to willingly give way to the future.

Enough of this How will the news business survive? mess. That's not the question. The question is How can we make news work much better given the new realities? That's a great question and the guy who answers it is the next leader of the news business.

The answer will not come from a corporation.

However, on the pages of the newspapers you hear voices of people who believe that corporations solve problems. They tell tales that never come true. "We have to find a solution" but the problem as they state it has no solution.

A couple of weeks ago a dear man named Guy Kewney died. He did something unusual, at least in my experience. He announced his impending death on Facebook, in a typically clever Kewneyish way (you had to suck your breath in when you realized what he said). Then he lived out his last days in conversation with those of us who cared to listen. His conversation was realistic. He didn't talk about how he had to find a solution, he knew that the body of Guy Kewney was going to cease to exist in a very short time.

Guy Kewney had the instincts of a reporter because he was a reporter. It was time for him to make way for the next group, and soon enough (too soon) it will be our time to make way, and on and on. But the news will still flow, the same way traffic will flow on Bleecker Street after the current mess is cleaned up.

A 140ish limit

I learned something about the 140 character limit in the last few days, as I've experimented with a piece of software that may or may not see the light of day.

We all know that a communication environment with a character limit is a unique thing. I haven't sung its praises because it's enough-praised by others. As an experienced writer, I'd rather self-impose limits, I can decide what's enough. There are some useful ideas that can't be expressed in 140 that could be expressed in 180, 250, or 360. The problem with inbetween-sized ideas is that it's not worth the trouble to create a blog post for them, and Twitter doesn't work if you stream together a sequence of tweets.

Anyway, here's what I learned. I will self-impose a 140ish limit if I make the text display really big, so that a web page can hold one idea. It's the "ish-ness" that's important. It's a soft limit, up to the writer. For some ideas I need to go over. Not all, not even most. But when it's necessary, it's nice.

Iron Man 2

Monday afternoon after doing RBTN, I often go to the movies, if there's anything interesting at the theaters. Today I went to see Iron Man 2. A brief review follows with no spoilers.

It's a sequel to an action movie. There can be no surprises. First they set up the ensemble, and what a group it is! Mickey Rourke, Scarlett Johansson, Gwyneth Paltrow, Samuel L Jackson (who looks like Stowe Boyd these days), Don Cheadle, and Garry Shandling as a US Senator.

Okay now we know who's in the movie -- there's a bad guy, and a couple of weaklings, some good guys. Same old schtick. It builds to a final fight scene in which the good guy (and his sidekick and girlfriend) triumphs and the bad guys look foolish and some of them die. You can see it coming from the first scene. That's why I said no spoilers.

Along the way as little happens as happens in Alice in Wonderland. Actually even less. Actually much less. Just a bunch of talking and people riding in cars and planes, and hanging out in offices, and watching old movies. Sounds like what most of us do in an average week. So much for escapism. Okay one guy breaks out of prison. Big deal.

But -- you expect in 2010 from a blockbuster franchise, some great freaking special effects that burn huge money. They're just not there. At the end you kind of think maybe they're going to steal the great scene from the Matrix Reloaded when Neo swoops down and snatches the Keymaker and Morpheus off the top of the truck as it's exploding, but nope -- nothing even remotely in that ballpark. (I searched for video of that scene, but Neo doing his Superman thing is the closest I could find.)

I really came close to walking out on this movie, I was that bored.

I noticed as the credits rolled by that Larry Ellison is in the movie, playing himself. I missed that entirely. I did see the "Oracle" name plastered all over the end of the movie. Maybe Ellison was one of the supposedly evil robots who actually looked like actors in stupid outfits more than they looked like impressive special effects.

One thing I loved about the movie is that it starred my hometown, Flushing, NY. They even mention the 7 train and the Willets Point station (is it still called that?). Amazingly, Flushing has become kind of cool. How the frack did that happen?

The original Iron Man was worth it. It had a great ending. The second one is worth skipping.

Afternoon sellout notes

I'm drinking coffee in the Au Bon Pain on 8th St near Broadway, but didn't check in on Foursquare cause I hear they might get bought by Facebook (heh that was a joke, I'm actually lazy). Hope they don't but I could understand why they might, cause the neck of the woods they reigned over is about to become the province of much bigger companies with far more users.

Brief comment on all the people quitting Facebook over the changes in their privacy policies -- I'm not quitting. I understood that they could do this and didn't put any info on the site that I didn't consider public. I always saw it as a publishing platform. If anything it frustrated me that it wasn't all public from the start.

The one company that I really care about is Dropbox, and I know almost nothing about them. I hope they don't sell out, what they have is too good for Google or Facebook. If I were a Twitter board member I'd seriously consider merging with them. It could really shake things up. On the other hand, the technology that Dropbox has mastered is so important that there should be an open source equivalent that we can all deploy, so we can have Dropboxes for sensitive info we don't want to share with them.

I'm not really worried about Dropbox and patents, given that we had much of what they have in 2002 in Radio's upstreaming feature. They did a better job of implementing it, so hats off to them. But there's plenty of prior art.

I put this idea out there because we should be looking at this. And wouldn't it be grand if Dropbox, realizing they had a shot at creating something huge, threw some fat on the fire while they were ascendent and open sourced their implementation right now.

A pen for an iPad?

My friend is a cartoonist and she got an iPad and loves it.

She of course wants to draw cartoons with it.

Drawing with a finger is too funky.

Anyone know if there's a way to hook up a pen for drawing?

Update: Make Mag has a how-to. Of course.

Michael Gartenberg writes: "Pogo makes a line of capacative pens that work with ipad. Available on Amazon."

A poor man's OAuth?

I know OAuth pretty well since I implemented a client a little over a year ago. It was hard work, but I got there, with help from one of the designers, Joseph Smarr. This version of OAuth, 1.0, worked something like Flickr's authentication system, which I had implemented a few years earlier. Wasn't sure what was gained with the new complexity, but Twitter had said pretty clearly they were moving to OAuth, and I was actively developing on Twitter, so I figured I'd better support it.

Since then there's a new OAuth that is incompatible with the one I implemented. I'm confused now about which one Twitter will use, but I don't care because in the interim I stopped developing Twitter apps. I've decided that when and if Twitter pulls the plug on non-OAuth apps, I'll just let my apps break.

The other day I had to write some OAuth code for another web app, and it was a bitch -- my Twitter-OAuth code didn't work with the new app. So we're in the place you don't want to be, with compatibility expressed in terms of apps instead of standards. It should be enough to support OAuth, but it's not.

The new OAuth is an attempt to simplify it, and if that's what we're doing, why not go all the way and make it Really Simple™ (sorry). Hey it's an open format, so anyone is allowed to have an opinion. So here goes.

Imho FriendFeed had a good idea. Instead of giving an app your password, you give it your remote key -- a random string they generate for you. So now you've authorized apps to access your account without giving them your password. No protocol change, we're still using Basic Authentication. The user's life gets a little more difficult, but so little it's hardly worth mentioning. They just have to understand this idea of a remote key. And it stays truly simple for developers.
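A minimal sketch of the remote-key idea in Python, assuming the service accepts ordinary Basic Authentication and treats the remote key exactly like a password. The user name and key shown are hypothetical, and this is not FriendFeed's actual code, just an illustration that nothing changes on the wire.

```python
import base64


def basic_auth_header(username: str, secret: str) -> str:
    """Build the value of an HTTP Basic Authentication header.

    `secret` can be a password or a remote key; the protocol
    does not know or care which one it is.
    """
    raw = f"{username}:{secret}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


# Hypothetical values: the remote key is a random string the
# service generated for this user, not the user's password.
header = basic_auth_header("alice", "remote-key-8f3a2c")
```

The app sends `header` as the `Authorization` header on each request, the same way it would if it had been given the real password.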

But that's not as good as OAuth because it doesn't allow the user to selectively turn off one app while leaving the other apps alone. (Note that you always had the power to turn off all the apps, just change your password, and boom none of them can get into your account.)

Credit card companies have had a solution to this for a long time. Consumerist has an excellent survey of the field. Each bank calls them something different, but they're all the same idea. CitiBank calls them virtual account numbers. They're credit cards that are good only at a single merchant. If you don't like what that merchant is doing you can just terminate the virtual account and they stop misbehaving right away, leaving all your other online accounts alone. All the virtual accounts funnel into the same account you've always used, but you can do business without giving away that account number. Now when someone steals your identity, they've got nothing. Isn't this exactly what we want from authentication? And it can be implemented without creating any new hurdles for developers.

Credit card companies use these numbers to protect their money, so why isn't it good enough for us to protect our users' identity?
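Here is a rough sketch of how the virtual-account-number idea might translate to app authentication, assuming the service issues one random key per user per app. The class and method names are made up for illustration, and a real service would persist the keys rather than hold them in memory.

```python
import secrets


class AppKeyRegistry:
    """Hypothetical per-app keys, modeled on virtual account numbers."""

    def __init__(self):
        self._keys = {}  # key string -> (user, app)

    def issue(self, user, app):
        """Create a random key that is good for one user at one app."""
        key = secrets.token_urlsafe(16)
        self._keys[key] = (user, app)
        return key

    def authenticate(self, key):
        """Return (user, app) for a live key, or None if unknown or revoked."""
        return self._keys.get(key)

    def revoke(self, user, app):
        """Cut off a single app; the user's other keys keep working."""
        doomed = [k for k, owner in self._keys.items() if owner == (user, app)]
        for k in doomed:
            del self._keys[k]
        return len(doomed)


registry = AppKeyRegistry()
reader_key = registry.issue("alice", "feed-reader")
poster_key = registry.issue("alice", "photo-poster")

# The photo app misbehaves, so turn off just that one.
registry.revoke("alice", "photo-poster")
```

Each key can still travel over Basic Authentication in place of the password, so the developer's side stays as simple as before, and the user gets the one thing the single remote key lacked: turning off one app without touching the others.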

Moving target APIs

I'm working on glue to connect to a new web app that I really like and want to try developing for. But they're already warning me that they are planning to change things about their OAuth implementation. This is happening before I have any investment in the platform.

That's what's wrong with corporate platforms. And no I don't want to say which one it is because I like the company and the product. It's the process that I don't like.

As a developer, writing glue to connect to an app is entirely overhead. While I'm doing this work I'm not producing a single feature that a user cares about, and therefore I don't care about it.

Getting on board one of these corporate APIs is usually a bad idea, because eventually someone inside the company decides it's a (believe it or not) good idea to break apps and guess what -- the apps start breaking! :-(

Watch, you'll see, some idiot developer will point out in the comments that it's good to break developers. They won't have even read the post so they won't know I'm already laughing about how stupid this whole thing is.

Ray's Pizza makes me happy

All they make is pizza, no sandwiches, no dinners, no salads. And I've tried dozens of other NY pizzas, and none of them approaches West Village Ray's. Their cheese tastes better than cheese should taste. The sauce is just-right, and there's just the right amount of it. The crust is good enough to eat without any topping, and it's served incredibly hot.

I always start with a fork and knife, and eat it like a main course. As I approach the middle, it's cooled off enough and the slop of it is just manageable to fold the NY way and eat like a sandwich. One slice is all you need. I get one every Thursday on my way to the meetup at Cooper Sq. I look forward to it the way I look forward to a favorite TV show.

Is NYC the next tech mecca?

Whether or not NYC is the next tech mecca, my moving here is not a reflection on that, one way or the other. I am here for mostly personal reasons. It's where I grew up. It feels like it's where I belong right now, so this is where I am.

But people ask me the question all the time, and I've had enough time to think about it.

First point, if we believe NYC is the next tech mecca, is it wise, on NYC's behalf, to boast about it?

In business I like to follow the example of San Francisco 49ers coach Bill Walsh, one of the winningest coaches in history, and also one of the most modest. If you asked Coach Walsh did he think his team would clobber the Dolphins or the Bengals in the Super Bowl, he would tell you the other guys were the best team to ever take the field in the history of the sport. The Niners would be lucky to score a single touchdown against such a formidable opponent.

They do the same thing in politics, always set the expectation a notch below reality. We should do this in tech too. Let the other guy do the blustering. Make sure your team and its supporters know that victory is going to be hard. The other guy wouldn't have gotten so far if he wasn't great. Let the other guy know he has your respect. Maybe he'll relax.

Now, with that in mind -- it pays to read Matt Mireles's piece that explains why Silicon Valley is still the place for bright entrepreneurs with a world-changing idea to build a team and get financing. He's probably right about that. But it may not be the best place to incubate the next layer of technology, in fact it almost certainly isn't.

I have some experience with that, because in 1979, as a bright young person probably a lot like Matt, I moved west from Madison to Mountain View to seek fame and fortune as a software entrepreneur. The road was a lot rougher than I thought it would be, but within ten years I had founded a company and participated in an IPO and realized the dream that a lot of people still have today, which I think is good.

But here's the important part of my story, that's relevant to whether or not Silicon Valley is the place to be, right now, to participate in the next level of change.

When I started my second company, in 1988, also in Silicon Valley -- the industry was approaching a level of maturity that, in tech, warns of a looming implosion. I was too young and inexperienced to know this, but the signs were everywhere. A few years before if you had a good idea, you could ship a product, promote it, build a user base, and find liquidity. Now the dominant companies had grown so big they were starting to choke the ecosystem. And the entrepreneurs who were showing up were less the bright-eyed engineers with big ideas, and more of the carpetbagging MBAs with pyramid schemes. Gotta say the VCs typically went for the MBAs. The era of the engineer, if it wasn't over, was certainly waning.

But even then, everyone thought the future of the tech world was being hatched in Silicon Valley. The only problem was, with the benefit of hindsight, the future of the tech world was actually being hatched in Switzerland.

And before that, the seminal product of the tech industry, VisiCalc, was being hatched in Cambridge, MA.

And in the next implosion, the rebooting tech was being developed by curmudgeons who didn't look like MBAs and sure didn't sound like them, so the bright guys of the Valley missed it. (I'm talking about blogging and social media, of course.)

So Silicon Valley may be the place you bring your revolution, once it's fully hatched, but the revolution itself is (apparently) hatched elsewhere.

So that says to me that NYC, with its incredibly huge pool of fresh talent, which is its advantage -- this is the largest metro area in the United States, and one of the largest in the world -- shouldn't be thinking about competing with Silicon Valley. They do what they do very well for a reason.

We should be thinking about how we can work with Silicon Valley, when the time is right.

In other words, I don't think we're going to stop taking planes to SFO anytime soon.

What we can do here, though, is iterate on the vision for the next level of tech, which I feel intuitively involves the humanities and media, as much as it will involve memory, batteries and input devices. When we need financing, we can turn to local sources, or we can get on that plane and teach the investors of the west coast how to come to JFK, which they all want to do anyway. I think there are enough people here now with the right idea so that the chance of booting up the next level is pretty good. It is not in any way a certainty -- there are plenty of other geographies with a lot going for them.

So the answer to the question is -- no, NYC is not the next tech mecca. But it could be.

Dr Brown's too sweet to be true

The local supermarket delivers so I order big quantities of soft drinks and canned goods that way, easier for me, esp since my car is out in Queens and I'm here in Manhattan.

I noticed on their website that they had Dr Brown's Diet Cherry and thought what a treat, I love cherry soda, and Dr Brown is an old NY name, let's give it a whirl.

So I get out a can, pour it into a glass over ice, have a sip and think "Man this tastes so sweet, I bet it's not diet." Sure enough, they gave me the sugar drink, 180 calories per can. No way Jose!

1.0 logic from a 2.0 guy

Scott Karp of Publish 2.0, who I've met and is a nice guy, says the kind of thing we rail against on Rebooting the News.

Scott Karp: "Apple could generate $1 billion in iPad revenue this quarter. Wonder who is smarter, Steve Jobs or his critics? You do the math."

Okay I did the math and got the answer: Does Not Compute.

1. I assume I am one of the critics he's talking about. It's true, I make less money than Apple does, in fact, I don't make any money at all, I spend money. I've been blessed by a few "liquidity events" in my life, enabling me to work on what I want to work on. It has always been thus for me, even when I was young, I adjusted my cost of living so that I could work for myself. Does this make me less smart than Steve Jobs? I don't see why it would.

2. Is it possible for someone to make a lot of money and also do things that are bad for the environment? Things that deserve criticism? Should we give everyone who makes a lot of money a free pass -- making them immune from discourse? The idea is silly on its face. In fact some business models, ways of making money, are based on cashing in public value in the ecosystem. Did Exxon fully pay for the ecological disaster of the Exxon Valdez? Did they earn a lot of money since then? Did they pay for the cost of the war in Iraq which has kept oil prices higher than they probably would be otherwise? Is this good? (Imho, no, it is not.) Should we criticize BP even though they surely made more money last year than 99.9999999 percent of us? I'm sure Scott didn't mean to say that, after all -- I think he believes in the power of journalism.  Most journalists make less money than the titans they cover (at least I hope so). Money ain't everything Scott, and it sure does not equate to wisdom.

3. I feel Apple is screwing us in some ways and blessing us in others. I bought Apple stock and have held it through the bad times and now am profiting in the good times. Yet I think it's good news that the FTC is reining in their power re the developers. Not only good because it is morally a good thing, but also good for shareholders. I buy all their products, although sometimes I protest. I feel like I'm using the Disney version of a computer with the iPad, and I prefer punk rock (although I like Disney movies too). I'm a lot like Jon Stewart, not quite a fan boy because I think I see when they are wrong, but certainly a fan.

So come on Scott, this is an old-fashioned 1.0 homily, the kind of thing my grandfather used to say for crying out loud. We now live in a world that's been messed up by unrestrained business models, possibly to the point where the planet will soon not sustain human life. Isn't it time to get rid of the shortcuts and use our minds when thinking instead of falling back on folksy sloganeering?

Response to Matt's important post about the Twitter API

Matt Mullenweg, the founder of Automattic and the lead dev on WordPress, has written an important post about the Twitter API. Lots of interesting observations and more than a little telegraphy here! Well worth a careful read.

The conclusions:

1. The Twitter API had a chance of becoming a de facto standard, but it didn't happen, for a variety of reasons.

2. It sounds like there's a WordPress client coming from Matt's company (unless there already is one that I'm not aware of) that works similarly to the Twitter clients.

3. It sounds more and more like Matt sees Twitter as competition. Or maybe this is wishful thinking on my part.

As a founder of this market, and someone who has the ear of most of the participants, I'd like to make a recommendation.

1. You have to support twitter.com, and this means working with whatever changes they make to the Twitter API. At this point they have the most users and the most influential users. Not supporting twitter.com, for most of the client developers, seems like it's not an option.

2. You should also support an open protocol, and by open I mean replaceable and simple. I explained that in yesterday's post in response to Chris Saad's piece. There's really only one way to go here, and that's a good thing -- RSS 2.0 as the format, and its <cloud> element to add the realtime component. It's decentralized, doesn't depend on any of the BigTechCo's, and isn't owned by anyone. It also isn't controlled by the W3C or IETF, which means it cannot be corralled by the BigTechCo's. It's easy to support, doesn't require a massive R&D budget and is not a moving target, and cannot become a moving target.

You must have a route-around of the dominance of the big vendors if the smaller independent and open source projects are to have a chance in the market.
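
To make that concrete, here's a minimal sketch in Python of a feed that follows the recommendation above: RSS 2.0 as the format, with a <cloud> element telling subscribers where to register for update notifications. The five cloud attributes (domain, port, path, registerProcedure, protocol) come from the RSS 2.0 spec. Everything else is an assumption for illustration: the build_feed helper, the example.org address, the port, the path and the procedure name are all hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical notification endpoint. The attribute names are the ones
# defined for <cloud> in RSS 2.0; the values are made up for this sketch.
EXAMPLE_CLOUD = {
    "domain": "rpc.example.org",
    "port": "80",
    "path": "/RPC2",
    "registerProcedure": "cloud.pleaseNotify",
    "protocol": "xml-rpc",
}


def build_feed(title, link, description, items, cloud):
    """Return an RSS 2.0 document (as a string) that carries a <cloud> element.

    items is a list of short status texts. Each one becomes an <item> with
    only a <description>, which RSS 2.0 permits (title is optional when a
    description is present).
    """
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = title
    ET.SubElement(channel, "link").text = link
    ET.SubElement(channel, "description").text = description
    # The realtime hook: where a subscriber registers to be notified.
    ET.SubElement(channel, "cloud", cloud)
    for text in items:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "description").text = text
    return ET.tostring(rss, encoding="unicode")


if __name__ == "__main__":
    print(build_feed(
        "Example status feed",
        "http://example.org/",
        "Short updates, one per item.",
        ["It's time for some rock and roll."],
        EXAMPLE_CLOUD,
    ))
```

An aggregator that reads this feed would use the domain, port, path and registerProcedure to ask for a ping when the feed changes, instead of polling. That's the whole realtime component, and it's small enough that anyone can implement it.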

RBTN #50

We're recording the 50th episode of Rebooting the News at noon Eastern today.

What would you like us to talk about?

Jay posted a request for topics, and there's already quite a response. Add your two cents. Or war stories.

Update: Here's the MP3 of today's podcast.

Replaceable

Chris Saad is onto something, he says "Open is not enough, time to raise the bar: Interoperable."

But I think the idea that Chris is really searching for is Replaceable.

Think of it this way. There is no root to the web. There is no home page. No place you have to go first before you go anywhere else. Same idea -- there shouldn't be any center to the graph-of-everything. That's where the bar should be set. And Facebook ain't even in the ballpark.

It's nice that Facebook has graph.facebook.com, except it should also be XML. And they should be willing to point into graph.scripting.com, and graph.whitehouse.gov and graph.yourserver.org. Anyone should be able to operate a graph. And of course we should be able to point into graph.facebook.com, and not just at the root, but into any bit of data they expose.

Then everyone is on an equal footing. I don't care if their format was approved by the W3C, all that means is that it'll be a kitchen-sink format with all the BigCo's getting to screw with it until interop isn't even remotely possible for anyone without a $100 million development budget. Screw the corporate-owned standards bodies. Instead be open in the only way that truly matters -- replaceable. And to be replaceable the format has to be simple. That way you have to always be earning your market, by providing superior value, functionality, performance, price and trust.

If there's any lock-in at all it doesn't matter if you call it open.

PS: To Chris, white-on-black text is really hard on older eyes. Be kind to everyone and stick with black-on-white.

It's my party...

In the early days when I was starting up DaveNet, which led to this blog a few years later, I would write the most self-indulgent essays in the days leading up to my birthday. They were also my best.

I finally wrote a book proposal this week. I think it's a good one. It's a book I'd like to read, so I'm pretty sure I'll like to write it. My agent said "you know they'll say it's just narcissism and all that," and I'll say (as I said to him) show me some writing that isn't.

And if you choose to write on your birthday, that's pure narcissism.

Narcissus, the Greek, sat by the river and gazed at his reflection, in awe of his beauty, so fixed he froze in place. Why move when you've found perfection?

One nice thing about the far west side of Manhattan. After a hot day the middle of the night cool breeze smells of the ocean. Not just any ocean but the ocean of sleepovers at my grandmother's Rockaway house, as a kid, a long long time ago.

In Howl's Moving Castle, a movie I saw for the first time a couple of days ago, a teen girl named Sophie has a spell cast on her by a witch. It transforms her into an old woman in an instant. It's exactly the spell that life casts on all of us! (I suspect the author realized this.)

Sophie quickly learns that being old is harder than it looks. And in some ways more satisfying. "When you're old all you want to do is stare at the sea," she says. "It's so strange, I've never felt so peaceful before." I know what she's talking about. I too find I can just sit and watch and feel great.

On the other hand, Bette Davis said old age is no place for sissies. My father quoted her often, in his last year, which was a very hard year indeed.

Kurt Vonnegut in his memoir, at 82, refuses blame for the awful state of the world. "It's not my fault," he protests -- "I just got here!"

Fifty-five. 55. Not 44, not 33, not 22, not 11.

55 birthdays. Almost two months of birthdays. You'd think I'd have it down. Every one is different but in some ways they're all the same. It's the one day when the party is about you. It's all yours. If you get choked up and cry, it's okay -- it's your day. All yours.

You can call me old for this one day -- I feel old, in a bittersweet way. But tomorrow it's back to normal, just plain sweet, and if you call me old I'll whack you with the cane I don't have. At least not yet.

Booting up Hypercamp in NYC

I've wanted to try doing a hypercamp for a long time, and it seems we'll likely do it in NY as an outgrowth of the Thursday evening meetups we're having at NYU.

I've written about Hypercamp many times, so I'll just provide a bit of context here. For background, you can read the previous writeups.

If I hadn't been traveling extensively in the beginning of April we would have done one around the iPad shipment.

It's another attempt to reboot conferences, this time both with format and content. The assumption is that a meaningful event has happened, it's very fresh, and there's diverging opinion among experts. The minute-by-minute "breaking news" period is over, but there's still a lot of data that most experts don't have, that needs to be shared.

It's also meant to replace press conferences and fill-in for the newsrooms that bloggers don't have. Eventually the Hypercamp is a permanent fixture, and sources and reporters, whether they're pro or amateur, gather regularly to share information and viewpoints. Because the facility is wired for Internet and video it also replaces CNN as the go-to place when news is breaking.

The Hypercamp in NYC would likely serve the media industry, fashion and finance. The Hypercamp in DC would focus on government, the one in SF on tech, etc etc.

I hear that out in Calif Jay is talking about rebooting news at a journalism conference at Stanford. This piece fits right into that.