What to watch for in Twitter's photo-sharing feature

Pictures in Twitter should be easy. On an iPhone, there should be camera functionality built into the Twitter app.

The steps to publish a photo should be as follows:

1. Take the picture.

2. Enter a title.

3. Click OK.

They should demo this, and everyone should be impressed if it makes it into their mobile apps.

I also expect this to be heavily silo'd. That there will not be a way to do this through the API. So we'll still be stuck including the URLs in the tweets, and under pressure from other Twitter users to give it up and use Twitter's client. This will be a battle akin to the argument over retweeting. Many if not most users preferring the old metadata-less way, to the new system-friendly way (I prefer the latter myself). But I think in this case the pressure will be the other way -- to use the Twitter, Inc method of photo-sharing with hidden URLs and fatter tweets.

If Flickr were paying attention, they'd have a timeline and allow you to follow photo-rivers on other services. And keep it wide open via the API, which works pretty well. Instead their home page is a disorganized mess. By going into pictures, Twitter gives Flickr one last shot at remaining more than an archive of the photography we were doing in the last decade. And not so much a big part of the way we do it in the future.

I'm sure I'll think of more questions that are likely to be resolved with Twitter's announcement, and things to ask about and think about.

Is there an education bubble?

This question is out there, and it's a good question to ask.

Originally posed by angel investor Peter Thiel.

Should everyone be getting a college education? Does an education actually do anything for the student?

Since I've been associated with universities twice, first with Harvard and now with NYU, and given my long standing in the tech industry, I feel qualified to have an opinion about this. (Not that lack of qualification ever stopped me from having an opinion.)

And by the way -- I was at Harvard when Facebook was hatching there. And I was at NYU during the Diaspora craziness. I had absolutely nothing to do with either of them. Entirely coincidence.

Here's what I think. College is a good thing. It can teach your mind a kind of discipline that you won't get in the real world. It can also teach a lazy arrogance, the thought that people in the commercial world somehow aren't as smart or clued-in as those in academia.

But lately, the reverse thought has been happening, as the tech world with its startup frenzy is sucking out some of the best students, with the dream that they can be the next Zuck. A few of them can, but there's only room for a few. For the rest, whether or not all this is actually a bubble, it will be a bubble for them. Most won't achieve fabulous wealth before they turn 30.

I think we should aim much higher in academia. We should aim to recreate the environment that made the Internet itself spring into existence, in academic institutions. I was also lucky to be at a very good computer science school during the boot-up of the Internet, and got to play a very very small role, mostly as an observer and student teacher. I learned from reading the code the Unix guys were writing. And marveled that something so simple, so clear, could do so much. More than teaching, the experience inspired me -- it showed me how great you can be with these tools. And I like to think I achieved some of that in the career that followed.

I think we're heading into another period, like the 70s and early 80s, when it's time to take a fresh look at how we do things. The venture world will never do that. I've spoken with several VCs about this idea over the last few years. They only understand one kind of investment, something with a short fuse that produces almost immediate results. The kind of work that creates revolutions requires more iteration, patience, trial and error and lots of bootstrapping -- creating tools that will be used to create tools to create tools and on and on. Out of that soup comes not just one or two commercial products but whole generations of them.

I wrote a piece in January about educating the journo-programmer. That's just one slice of it. Overall the challenge for education is to excite and inspire our young people to do great things with their lives. Sure it's wonderful to have economic resources, but it's even better to have a mission.

Flickr becomes important again, imho

Sounds like it's totally confirmed that Twitter will announce that they do photos, this week, at the D9 conference in California.

Meanwhile Flickr is still the way I upload pictures. I like that it's owned by Yahoo, a sleeping giant, living large and dangerous.

A lot of good people, people with years worth of photos on Flickr, will wonder what happens now.

Twitter going into photos will give us pause to think.

Me, I know what I'm doing -- I'm going to make a new home for my photos on the net, not inside a corporate blogging silo.

I'm totally okay with keeping the photos at Flickr. And continuing to keep them there. But I want them somewhere else too. Just in case.

The importance of perspective

A quick story...

I had a major liquidity event that had to be covered by tech analysts, even people who didn't like me. And when they wrote their pieces, they generally said that they didn't, in one way or another -- and one even said why.

He said I turned on him.

I was puzzled because I don't remember doing that.

What does that even mean, and do people ever actually turn on others?

Or is it just the way it seemed to him? That was the epiphany I had a few days ago. To him, that must be what it looked like. Because he was doing the same things he was always doing, nothing changed with him. But one day, after me writing a bunch of glowing stuff about his work, I wrote something negative. That must have been what he saw as the "turn." And it makes perfect sense from his point of view.

From my point of view, something completely different happened.

I had been under the impression that his conference was run a certain way. That people speaking were chosen for merit, that they didn't pay for the right to speak. I learned that this wasn't true. The keynote speakers had all paid for their slots. And when they spoke they delivered ads, targeted directly at the people in the room. There were even calls-to-action -- Act Now -- this offer expires, etc etc. It was so completely obvious, and was not even slightly disclosed. Maybe the guy putting on the conference thought it was so obvious that he didn't need to disclose. The disappointment I felt was felt by others who were quite vocal in the hallway conversation.

So I wrote a piece basically saying I didn't like the conference. Big deal. I knew it wouldn't matter much. People would still go, and over the years the conference grew and grew, and as far as I know they continue to do the same thing. I don't go to the conference because I don't like my time being used that way. I don't like watching commercial TV, but I put up with it, because at least it's honest. This was both a waste of time and dishonest.

The point of this piece though is that to each of us, the event was totally different. I just figured out how.

Facebook is building a browser

TechCrunch reports that Facebook is hiring a team in Seattle to work on "desktop" software. If you think about it for five seconds, it's got to be a browser. Of course they're building one, just as clearly as Google was working on a browser before they launched Chrome.

If you want to play at the level that Google and Facebook are, you have to be able to customize the user experience, beyond what you can do in HTML. That means you have to have a browser.

Facebook probably has a camera in development too. And every other thing that can be simplified by assuming you're only communicating with Facebook. And maybe clicking on an occasional link to a news story or catalog outside Facebook.

Update: I did a quick podcast to explain why Facebook is a force for vertical integration.

Iran's idea -- not far-fetched, at all

There's an idea floating around, via the Wall Street Journal, that Iran is contemplating cutting their Internet off from the rest of us. Basically creating a private Internet that's not connected to anyone outside Iran.

It's very possible. If there are only a few points of entry into the country, they must control those.

The miracle is when things are compatible, that's why the Internet is so great. If you tried to get all the countries or all the companies to agree to a standard that would allow all their private networks to interoperate, it would never happen. Only when a bunch of idealists in academia thought it would be neat if they hooked their networks up could it happen. Before anyone else was interested.

The reimagined Battlestar Galactica had a lot of great ideas. They had all these old-fashioned items around the space-age starship, a reminder that this all happened so far away that time is practically meaningless. As far away as they are in time and space, they are a lot like us, in many ways. The Galactica starships didn't have the Internet. It's not that they didn't know how to do it, they did -- they had to disconnect because it was an attack vector. In one episode they had to temporarily turn the Internet back on, as a desperate measure. And sure enough, within seconds, probably milliseconds, the viruses started attacking. It was only a matter of time before their databanks were corrupted. Of course they saved the day, and lived to fight the Cylons once again.

Iran is under attack in exactly the same way. Their techs can't control the viruses we're sending at them. This means two things:

1. They will eventually wise up and take their Internet offline.

2. And watch out, they have hackers too. And if we can do it to them, you bet they can do it to us.

In other words, enjoy the freedom of the Internet. Because it's quite possible you won't have it much longer.

Butt hurt, Fleet Week bike ride

Yesterday's ride was great, but gave me a case of buttus sorikus, otherwise known as "sore butt." Which sounds like sorbet, but is not.

That and Fleet Week made midtown on the Hudson a mess, so I turned around at the Intrepid and made it a shorter ride.

I must get out earlier, avoid the crowds.

Here's the map. 51 minutes. 7.2 miles.

11 mile ride, with a blowout!

I was feeling all kvetchy until I got on the Hudson River trail, when a tail wind lifted me into high spirits.

Didn't think much of it as I rolled over something that went POP! -- until I was parked at the corner of Hudson and 10th waiting for a light when my rear tire blew out. Just standing there. Sounded like a gun.

Luckily there was a bike shop two blocks away, friendly folk. Not only did the tube blow, but whatever it was that I rolled over at the beginning of the ride shot a big hole in the tire itself. Replaced both for $40. Whole new ride.

This was the first hot weather ride of the year, and I gotta say this is an activity done best in the heat. 11 miles later, I feel great!

Map: 11.10 miles, ride time -- 1 hour 29 minutes.

Archiving my Flickr photos and drawings

One of the things on my todo list for a long time is to get systematic about backing up my Flickr pictures.

I've finally got an app that seems to be fairly bulletproof, I wrote it carefully to check on itself. There's no point having a backup if you aren't fairly sure that you've got everything. And all the metadata is backed up too.
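Here's a minimal sketch of how a backup could check on itself. It is not the actual app, just the idea: take the list of photo ids the service says you have, then flag every id that is missing either its picture or its metadata on disk. The folder layout (`<id>.jpg` plus `<id>.json`) and the name `find_missing` are assumptions made up for this illustration.

```python
from pathlib import Path


def find_missing(expected_ids, archive_dir):
    """Return the ids that lack a photo file or a metadata file on disk.

    Assumes a hypothetical layout: <archive_dir>/<id>.jpg and <id>.json.
    """
    archive = Path(archive_dir)
    missing = []
    for photo_id in expected_ids:
        photo = archive / f"{photo_id}.jpg"
        metadata = archive / f"{photo_id}.json"
        # A photo only counts as backed up when both parts exist and are non-empty.
        for part in (photo, metadata):
            if not part.is_file() or part.stat().st_size == 0:
                missing.append(photo_id)
                break
    return missing
```

An empty result is the signal that the backup has everything in the list. Anything else is the list of photos to fetch again.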

Now I'm running the app. By my calculations it should take about 15 hours.

While I'm waiting, I wrote a little readout page that shows the last ten pictures that have been archived, in reverse-chronological order. It's kind of interesting to watch them roll by. Especially because it picks them in random order, so there's no telling where or when you're going to go next.
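The selection behind a readout like that can be sketched in a few lines. This assumes each archived picture is logged as a record with the time it was archived. The `archived_at` field and the function name `most_recent` are hypothetical, not taken from the real page.

```python
from datetime import datetime


def most_recent(records, limit=10):
    """Return the `limit` most recently archived records, newest first.

    Each record is assumed to be a dict with an "archived_at" datetime;
    that field name is hypothetical.
    """
    newest_first = sorted(records, key=lambda r: r["archived_at"], reverse=True)
    return newest_first[:limit]


# Example log: the pictures were taken years apart, but only archive time matters.
example_log = [
    {"title": "Bike ride", "archived_at": datetime(2011, 5, 29, 10, 0)},
    {"title": "Drawing", "archived_at": datetime(2011, 5, 29, 10, 5)},
]
```

Because the sort is on archive time rather than the date the picture was taken, the readout jumps around in time the way the post describes.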

http://myphotos.scripting.com/recentphotos

Feel free to watch along with me.

Should I be linking to the NY Times?

Interesting sequence of tweets this morning.

First, I pointed to a NYT piece on the last minutes of the Air France jet that crashed into the Atlantic.

I don't remember why, this time, I prefaced the link with NYT. Doing that got me a reply from Dan MacTough.

Great idea to prefix links to NYT with "NYT" for those of us worried about the stupid monthly cap.

That isn't why I put the prefix there, but it's an interesting thought nonetheless.

I point to the NYT a lot. I'm not feeling the pain because I got a freebie subscription from Lincoln. I gather Dan did not get the freebie.

I pointed to the Times in this case because there was a lot of coverage of the recovery of the black box, and I chose the Times because I thought they'd give me less sensational bullshit than the others (and no annoying interstitial ads, it's often why I don't pass on links to Salon, for example).

We need to get organized about this stuff. The Times paywall has been here long enough that we should have answers to questions like this by now.

Themable, Day 2

On Tuesday I posted a note here asking for help in making worldOutline.root themable.

It's been so quiet since because I got the help I was looking for, from Dave Jones, and we've been busily going back and forth on the changes to make the app open to designers. And we just now delivered the new parts that make it work.

The howto that explains the update.

Here's a link to a page in my worldoutline site.

Now to get some designers to play in the new playground.

PS: Dave is playing with themes. Nice!!

Let's make this app "themable"

I got to an interesting place on a project to display outlines as HTML directories.

As usual, my design is very simple, but I want to -- as always -- open it to be themable by designers. This means that all display elements can be controlled from styles. I already have the architecture built and running for styles and it's very cool.

What I don't have is the basic HTML structure.

That's where you, the friendly geek designer, come in.

Here's the screen shot.

I'm deliberately not showing you the web page because I don't want you to retch in disgust when you see how I did it.

Do it right. Mock it up in static HTML and I'll take what you come up with and put it back in the app, with full credit of course.

And then when it ships, as open source, it will be themable, and we can all have a blast building these beautiful directory sites that link to each other.

Anyway that's the idea!

PS: If you happen to be running a worldOutline server, this is how it works behind the scenes, and an idea of where we're going with this.

HBO GO (with Verizon) -- doesn't go

I'm a legal HBO subscriber. I want to watch a program on HBO on my computer or iPad, so I went to the HBO GO website.

Clicked on Verizon to sign on. Entered my username and password. It says it's wrong. Over and over.

Used the same username and password to sign on to the Verizon site. Works fine.

That was 45 minutes ago. I've been on hold, after being transferred three times. I tried their virtual chat assistant but it couldn't understand that I don't have a voice line at home, and kept insisting that I enter that number. (I have a Verizon cell phone.)

That's where I'm at right now.

Still holding.

Thank you for your patience. You will be assisted momentarily. Please stay on the line.

Your call is important to us. Please continue to hold for the next available representative.

Thank you for waiting. Please continue to hold for the next available representative.

Your call is very important to us.

I gave up after an hour.

PS: I was of course calling on the Verizon phone and accessing the website on FIOS, both of which are part of the account that subscribes to HBO. That they somehow couldn't correlate this info and just give me access to the site, is fairly pathetic. And of course the show I want to watch is still sitting there on their website. Where I can't watch it.

PPS: I made a recording of the Verizon hold message.

Update: June 1 -- They got me an account that is valid for HBO GO. I'm in.

Apache for Poets

One of the 11 ideas for Hackathon projects that also would be good ideas for incubator entrepreneurs is an Apache with a user interface that a mortal could love.

I just wrote an email to a developer explaining the idea, and thought I should do that openly.

Most developers approach the problem from the wrong direction. They think: How can I make the power of Apache understandable to a regular human? If you try to do that you will confuse people and fail in your mission. Apache cannot be understood by a regular person. You have to love a special kind of torture to have the patience to learn how to configure Apache.

Instead, start from the other direction. Pretend you're a person who understands the web and how a computer filesystem works. Now go edit the httpd.conf file for Apache and make it so that it serves the content of a folder and nothing more, and does it without opening any security holes.

That's what I think of as a "zero conf" web server. You don't have to do anything to set it up. Install the app, copy the files into the special folder and bing! -- you've got a web server.
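To make the "serve one folder and nothing more" idea concrete, here's a rough sketch. It uses the small web server in Python's standard library instead of Apache, so it only illustrates the shape of the product, not a hardened implementation. The function name `make_folder_server`, the default port, and the `public` folder in the comment are all assumptions.

```python
from functools import partial
from http.server import SimpleHTTPRequestHandler, ThreadingHTTPServer


def make_folder_server(folder, port=8000, host="127.0.0.1"):
    """Build a server that serves the files under `folder` and nothing else."""
    # The handler is pinned to one directory; there is nothing else to configure.
    handler = partial(SimpleHTTPRequestHandler, directory=str(folder))
    return ThreadingHTTPServer((host, port), handler)


# Typical use (not run here, since it blocks until interrupted):
#     make_folder_server("public").serve_forever()
```

The only decision the user makes is which folder to serve, which is the one option a person who understands file systems would expect.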

Now start adding options, very carefully, always asking this question: Would a person who understands the web and file systems understand this feature? If not, sorry, it doesn't go in.

An example. Maybe I don't like your choice of the folder to serve from. Give me a command to have it serve from a different folder.

Another feature that's a no-brainer: associate a CNAME with a folder. As many as you like.
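Associating names with folders could be as simple as a lookup on the request's Host header. This is a sketch under assumptions: the mapping, the folder paths and the function name `folder_for_host` are hypothetical, and it ignores bracketed IPv6 addresses for brevity.

```python
def folder_for_host(host_header, folders_by_name, default_folder):
    """Pick the folder to serve for a request's Host header.

    `folders_by_name` maps a domain name to a folder path; several names
    may point at the same folder.
    """
    # Drop an optional ":port" suffix, and ignore case and a trailing dot.
    name = host_header.split(":", 1)[0].strip().lower().rstrip(".")
    return folders_by_name.get(name, default_folder)
```

From the user's point of view the whole feature is a two-column list, name on the left and folder on the right, which passes the "would they understand it" test.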

Redirects? Can you explain that to your user? Can you do it in a way that it doesn't add complexity for the user who doesn't care about them? I'd say redirects are a borderline feature, for a version 1.0 at least. But I'd try -- because redirects are really useful. (And filesystems have the idea of an alias or shortcut, so it's not crazy-ass foreign to normal people.)

Have I ever seen such a product? Yes, in fact I have. The Mac, in the mid-90s, had MacHTTP. And we had an FTP server that worked this way. How did we lose our way? Unimportant. Now let's get back on track!

Browsers without address bars?

In the last few days an idea has taken hold, at least in public, that the future might be browsers without address bars.

I have mixed feelings about that.

When I got my first demo of the web in 1992 or 1993 my first reaction when I saw http://etc in the browser's address bar was "People won't do that." It's one of those times I was glad I was wrong.

Just two years later, driving on Highway 101 past the Marin Civic Center in San Rafael, I saw the URL of its website in huge letters on the marquee in front. Totally visible to all the cars going by on the freeway. The idea went from baffling to mainstream in just a couple of years.

Nowadays I can tell almost anyone to type a URL in the address bar of the browser, and they'll probably have some idea how to do it. They might not know what a browser is. And they might go to Google to search for facebook.com, but they find their way there.

Another story. I was taking a taxi from Montego Bay to Negril in Jamaica in 1988 or so. My driver, a friend of my uncle's named Indian (all Jamaicans have second names like that), pointed out a community with dozens of identical bungalows. He said Cubans built them. They have all the modern conveniences but no one wants to live in them. "They don't have back doors," he said. I didn't get it at first.

In a way the address bar is like the back door. It's the way you can be sure you can get somewhere even if all the powers-that-be don't want you to go there. It's just a feeling. I don't want to give it up, for me, or for anyone else.

I'd like to keep the address bars just as they are.

Open letter to incubator-entrepreneurs

Just had an idea. I know at TechStars/NYC the next crop is preparing proposals. This is a good time to try to influence you!

I have a list of 11 ideas for new tech projects. Some of them, I believe, have a serious chance to make money.

If you want to do one of the ideas on this list, or if one inspires you to an even better idea, I want to know about it -- before you submit your proposal. It's okay to contact me. I would like to help you put the plan together. (Assuming you have a serious chance of winning.)

Once it's been through the incubator process, assuming you're successful, I'd like a chance to invest.

I don't know why I didn't think of this sooner.

PS: It doesn't just apply to entrepreneurs with TechStars or even NYC.

PPS: Maybe we should call this early-stage-mentorship. Or entrepreneurial mentorship. Or lazy entrepreneurship. Or venture visions. Whatever it is, I have a roadmap, and if your work fits in, I want to participate.

Apple should protect its developers (and users)

I read this piece by the EFF about Apple and patent trolls and defenseless developers.

The gist: Apple requires developers to use an Apple-provided service in order to sell their apps in the App Store. The developers are getting sued for patent infringement for doing this. Apple itself is exempt from being sued because they did a separate deal with the owners of the patent.

Apple should defend the developers. It's not just a moral thing -- it's smart business. Assuming they want to have developers.

It struck me as parallel to another situation where Apple is making the wrong call.

All of a sudden Macs have malware. I've seen the attack that's rampant, and it gave me a sick feeling, because it reminded me of the reason I switched back to the Mac in 2005. Windows had become a horrible mess of malware. Microsoft's position was the same one that Apple is now adopting. Leave the users, most of whom think they're immune to malware (Apple told them so!), to fend for themselves.

They should do a business school case study on this kind of lunacy.

Nowadays, Microsoft very much takes responsibility for keeping Windows machines defended against malware. The malware problems that caused me to stop using Windows are not gone, but the defenses appear to work. I have several Windows machines that (knock wood) appear to be malware-free and running smoothly. But that was too late to keep me as a Windows user on a personal level.

The situations with users and developers for the Mac are exactly the same. Apple is the strong party, they're the deep pockets, and they're the ones with the most to lose if developers can't develop for and users can't use their products.

PS: A good idea for a startup might be a virus scanner for the Mac. Sad, but that's where we are now. And let's hope no patent troll sues them for it.

Stereotypes in software

I'm lucky to be involved as a mentor in TechStars/NYC.

So far that has meant meeting with a few of their startups to give them my impressions of their ideas and execution, for whatever it's worth.

I remember well sitting on their side of the table, although when I was their age, the process was less formal. However, when I was starting up I knew a lot of the stuff we were improvising would eventually be standardized. I always believed in the incubator idea even when it was out of fashion, even when it was considered "disproved."

Why, as a creative person, did I have to become a corporate executive? That was a mistake. Good software, like anything creative, is made by people who focus on product, not business. Managing a company, raising money, dealing with crises of all kinds, took me away from the thing I do best, and love, which is create.

But now, in the tech incubators and hackathons, I see another of these kinds of problems.

The people who play the role of mentors are older, and the people who play the role of startups are younger. For the most part the people judging are non-technical, they're not product developers. And the younger people must be product developers -- or there will be no product. (Of course they have other kinds of people on their teams.)

I'd like to see some of the ideas I'm working on get the incubator treatment. Starting companies may be something for the younger folk but I'm not willing to concede this any more than I would have conceded that product development is something exclusive to them. Maybe there's a person who loves running businesses who started companies in his or her 20s and 30s but thinks they could do it better in their 40s, 50s and 60s? I wouldn't rule it out. Not everyone wants to be sitting in the mentor chair. Some people want to do. (Nothing wrong with mentoring of course.)

I'm disheartened that product developers aren't usually among the ranks of mentors and hackathon judges. In no other field is it like this. Do movie directors get their inspiration from financial people? I hope not (unless the movie is about financial people). Does the medical profession get its mentoring from doctors and medical researchers who have been there before? I hope so! When I was a math student, my teachers were mathematicians, not venture capitalists.

As someone with a boatload of experience, I will tell you that experience isn't everything, but it matters. I had my choice of mentors when I was young. The guy I settled on was a generation older, but a product guy who had been successful in business. We just gravitated to each other. And I had tried out a lot of other relationships before that.

But it would be wrong to assume that people in their 40s and beyond are not capable of creating breakthrough products. Some of us are.

Deadness

April 1997: "The press only knows three stories, Apple is dead, Microsoft is evil, and Java is the future."

I was just looking through pictures from Esther Dyson's PC Forum in 1992. True, some of the companies and people there are dead. It's sad.

We're all a lot older, but hopefully wiser too. It was wonderful to see all the old faces. Those events were like summer camp for us.

Here's Steve Jobs and John Sculley looking happy.

Mitch explaining to Barlow and Lewin.

Mitch with his ever-present notebook.

John Doerr and Stewart Alsop. (Note 1992-era laptop.)

A crowd gathered listening to Bill Gates hold court.

Ray looking impossibly young. Ballmer looking like Ballmer.

JLG is dapper and French.

And Dan and Dan (these two guys eventually sued each other out of business).

The pictures were taken before Apple had been declared dead by the PC industry press. (The supposed cause of death, unwillingness to allow Mac clones.) It's true that at times things looked pretty dark for Apple, but as we all know, Apple didn't die. And it wasn't dead when they said it was dead.

So, younger tech press people, who were still in grade school when all this was happening (if that!) -- nothing has changed in your profession. You call yourselves bloggers now, but you're remarkably like the press people who came before you. Anxious to please the people who buy your ads, and stir up a controversy -- where there is none.

Just sayin!

Conversation on Twitter degrades further

There are many problems with trying to have a conversation on Twitter.

1. 140 characters can only express very simple ideas.

2. Assembling a chain of messages is hard work. Storify helps, but only if someone is willing to put in the effort. For most things it's not worth it.

3. Yogi Berra: "No one goes there nowadays, it's too crowded." For years social media experts have been writing columns saying the way to make Twitter pay is to "engage" with "key influencers." That means there's a lot of strategic engaging going on. In other words, spam.

4. And Twitter has inadequate defenses against spam. How can you tell if the person at the other end is real or a kid in Guatemala or Malaysia being paid by the message to engage with people to help build cred for some spam-issuing Twitter account? And there's no way to delete a tweet short of blocking the person who sent it. So, as spam gets worse, the block gets wielded more freely.

How dates work in the Flickr API?

I'm doing some programming against the Flickr API and am having problems I've not seen before.

I uploaded a test picture to Flickr at 10:05:25 AM.

When I called flickr.photos.getInfo it said the photo had been uploaded at 2:05:25 PM.

All the dates it had for the picture were 2:05:25 PM, except for "taken" which was correct. Looking at the code, that's the only date that's not transmitted as a Unix date. Its format is like this: 2011-05-19 10:05:25.

So the Unix date that Flickr sends back, 1305813925, is three hours ahead. What accounts for those three hours?

This is the code that converts a Unix date to an internal date:

date.set (1, 1, 1970, 0, 0, 0) + number (unixdate)

I'm thinking it has something to do with the three-hour time difference between NY and Calif?

Puzzling...

How I worked around the problem

As Jonas explained in the comments, Unix dates have to be UTC-based, and ours are not. So there's a mis-match there. Fixing the encoder, which could easily be done, has the potential of breaking a lot of other apps. There are ways to work around that too, but I'd rather not go there, because I believe I found a workaround.
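To make the mismatch concrete, here is a minimal Python sketch (not the code that runs this blog) of a converter that treats the epoch as UTC and only then shifts to a local zone. The function names and the UTC-4 offset (New York daylight time in May) are my assumptions. By the clock times quoted above, 10:05:25 AM versus 2:05:25 PM, the gap is four hours, which is what that offset would produce.

```python
from datetime import datetime, timedelta, timezone

# The Unix epoch is defined in UTC, not in the local zone of the machine.
UNIX_EPOCH_UTC = datetime(1970, 1, 1, tzinfo=timezone.utc)


def unix_to_utc(unixdate: int) -> datetime:
    """Add the seconds to the UTC epoch, giving a timezone-aware UTC time."""
    return UNIX_EPOCH_UTC + timedelta(seconds=unixdate)


def unix_to_zone(unixdate: int, utc_offset_hours: int) -> datetime:
    """Same instant, expressed in a zone with a fixed offset from UTC."""
    zone = timezone(timedelta(hours=utc_offset_hours))
    return unix_to_utc(unixdate).astimezone(zone)


uploaded = 1305813925  # the value Flickr sent back
print(unix_to_utc(uploaded))       # 2011-05-19 14:05:25+00:00
print(unix_to_zone(uploaded, -4))  # 2011-05-19 10:05:25-04:00 (assumed offset)
```

Adding the seconds to a local-time epoch, as the one-liner above does, yields the first value but labels it as local time, which would explain why everything except "taken" looked hours ahead.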

When I call Flickr to get new photos, as I loop over them, I compare the upload date of the photo to a previous high-water mark. If it's greater, I reset the mark. Every time I search for new photos, I ask for all the photos since the mark. I don't even bother looking at it, don't care if it's in the future in my timezone or the past. I just care that it's what Flickr thinks is the correct time.

What led me to this was a wish that they would just send a token back with the search results that I could send back to them next time. Then they could worry about what it means, and my only job is to store it along with the images I have. I realized I could treat the upload date as if it were such a token. I'm running a full backup now, and will let you know if it worked, but my initial experiment suggests that it will.
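The high-water-mark idea can be sketched in a few lines of Python. This is an illustration under assumptions, not my actual backup code: `search_since` is a hypothetical stand-in for the remote search call, and the photo ids and dates are made up. The point is that the mark is only ever compared with other values from the same service, so its meaning in my timezone never matters.

```python
from typing import List, Tuple

Photo = Tuple[str, int]  # (photo id, upload date exactly as the service reported it)


def search_since(library: List[Photo], mark: int) -> List[Photo]:
    """Hypothetical stand-in for asking the service for photos newer than mark."""
    return [photo for photo in library if photo[1] > mark]


def sync(library: List[Photo], mark: int) -> Tuple[List[str], int]:
    """Fetch everything newer than the mark and return the raised mark."""
    fetched = []
    for photo_id, uploaded in search_since(library, mark):
        fetched.append(photo_id)
        if uploaded > mark:
            mark = uploaded  # treat the date as an opaque token, never interpret it
    return fetched, mark


library = [("a", 100), ("b", 250), ("c", 180)]
fetched, mark = sync(library, 0)     # first run picks up all three
library.append(("d", 300))
fetched, mark = sync(library, mark)  # later run picks up only the new one
```

The mark has to be stored between runs, alongside the images, for this to work.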

Update: It seems to work.

Test post

I've made some changes to Scripting2, the software that I use to publish this blog. The purpose of this post is to see if I've broken the software. Let's hope not!

If you can read this that means that not only can we still create new posts, but it's possible to edit them after they have been created.

Happily all seems to work as previously.

Why outlines work

Had lunch the other day with a programmer friend, a very accomplished and smart and thoughtful guy. A studious reader of this blog, I think for about two years, who's trying to figure out the shape of the software I'm working on.

I am swinging back to outlines now, and finding they have new relevance, and that I understand things I didn't understand before. So that's what I'm talking about these days.

However, my friend the programmer says he's missing the context, because I never write about outlines. He's right. I stopped writing about them a long time ago, before the web and blogs. So there's no body of writing to explain why they work.

I'm going to skip all the history for now, and just explain outlines in the 2011 context.

It feels like I manage hundreds of "sites" where I accumulate or hope to accumulate lots of ideas, record events, store pictures, etc. As much as I have trouble keeping up with what everyone else is writing, I feel that what I have created is even more out of control.

So I want to organize and simplify and make it easy to find things, and when I spot a mistake on a blog post or a howto, or want to add a note to a picture, I want to do as little work as possible to find the source text, make the change and save the result.

Outlines are rapidly becoming the way I do that.

Until recently I only used outlines to write individual articles like this one. And of course to write code, and manage object databases (Frontier allows me to edit almost any structure in the outliner, and programs and their data are well-modeled as hierarchies.)

I've figured out how to do the next-up level, to manage collections of sites, in one document that I can search quickly and navigate, and easily reorganize structurally. And where linkrot was always the result of restructuring in the past (one of the reasons I didn't use outliners to organize my entire net presence), I now have a solution for that. It was possible to do it years ago, but I didn't think of it. That I'll write about in more detail when I'm ready to release an app that anyone can use for this purpose. But the feature already has a name (sorry for the tease -- no I'm not).

In the true spirit of a bootstrap the link to its name is an instance of itself.

Programmers love recursion. It's the rabbit we pull out of our hats.

Why Microsoft bought Skype (Fanciful)

Just occurred to me that it's possible that Microsoft bought Skype for its potential to route-around the telcos. Or to put pressure on them to get better deals. Or it's possible that behind-the-scenes they weren't getting enough love from the telcos, who see Microsoft as a second-tier player in mobile comm (as do the rest of us) and that owning Skype was a good way to get their attention.

If you look at it that way, it's not too bad a deal for Microsoft. (Verizon's market cap is $104 billion, AT&T is $184 billion, Sprint is $15 billion.)

What made me think of this is that Bill Gates has said he was a big supporter of the deal. Then I thought back to a speech that Gates gave in 1981 in Palo Alto, just after the IBM PC came out. It was a pretty big speech, though at the time no one made a big deal about it.

Here's what he said (I'm paraphrasing, from memory): "I know the history of computers. Microsoft will be a huge company some day, and when we are, we'll tend to be like all the other big companies. And some upstart will come along and challenge us the way we're now challenging the leaders of the computer industry. But I will remember what it's like to be an upstart, and I won't let them have the advantage."

He probably wasn't quite that clear about it, but that was the point. And we know who the upstart was -- Netscape. Once he vanquished them, he relaxed, and let Google do the job Netscape was trying to do. And now Bill Gates looks like every other computer mogul to come before. Rich, but on his way to being forgotten.

Except Gates is still around, and if he was that history-aware when he was young, why wouldn't he still be -- only more so. Now he has all the experience he didn't have then. He's a voracious reader, now he has 30 years of book-reading that he didn't have when he was in his 20s. So if you're Gates, and you're watching your creation flounder into irrelevance, what do you think of doing? Disrupting, of course.

I floated this idea on Twitter, and people say that they don't think Microsoft is capable of doing anything but supporting the status quo. That's cause they're thinking of Ballmer's Microsoft. But Ballmer does not equal Gates.

Anyway, okay I know this probably isn't true, but it's often good to let your mind relax assumptions, and play What If.

On the other hand, the original potential of Skype, if you can remember back to its inception (I can) was that it would disrupt the telcos, the same way Netflix is disrupting the entertainment business. If Gates can somehow keep the mess that Microsoft has become from interfering with the opportunity, then he could still do some disrupting before heading off to the Old Software Dudes farm.

Update: Kevin Fox blogged this before I did. Smart guy!

RSS and CSS and Zeldman

Over on Zeldman's blog, a post with a heartbreaking title.

I wish these guys would stop and think before indulging in sensationalism.

Look, Twitter and Facebook are bad for everything we, the guys who came before, hold dear.

At first, Twitter looked like it was going to be a friend of the open web, possibly because it was started by people who came from our world. Or possibly because that was good marketing. No matter, the people who run the show at Twitter today have no love for the free-for-all that is the web. The one that allowed writers, geeks and designers to work together on wonderful collaborations called blogs.

To Zeldman, I left a comment that hopefully puts it in perspective.

The same fate you've left to RSS applies equally to your beloved CSS. Your CSS skills will go the way of COBOL programming if Twitter and Facebook replace the web. If you don't want that to happen, support technologies that preserve choice. Like RSS.

Tumblr and my Blork feed

As you probably know, I'm using my new Blork tool to do all my link publishing these days. My links flow, through RSS, to other Blork users. And to Twitter, of course. And now they flow to a new place: Tumblr.

I wanted to do this for three reasons:

1. More and more people are using Tumblr.

2. It's beautiful.

3. It seems uniquely suited to link publishing. It even has a link type post. And the templates account for them.

My Blork-to-Tumblr connection is working except for two things:

1. The order is all screwed up. Every time I post a new item it's like shuffling the deck.

2. The dates are wrong on the posts. They all say May 16. Even though most of them were published on May 15.

I know that Tumblr has them in the correct order in its database because when I go to the dashboard they are in the right order.

I see that other people are having similar problems.

PS: My links flow to Tumblr through its API. I'm not using their RSS import feature.

Update: The problem was mine. My (new) code had a bug -- it was updating each of the last 20 items every time it checked. Once it settled down to only updating when the items changed -- and that's rare -- everything worked as you thought it should. So it was my problem, not Tumblr's.
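For anyone wiring up a similar connection, here is one way to update only when an item changed, sketched in Python. It is not the Blork code. The fingerprinting approach, the function names and the item fields are all assumptions made for illustration.

```python
import hashlib
import json
from typing import Dict, List


def fingerprint(item: dict) -> str:
    """Hash a canonical JSON form of the item so any field change is detected."""
    canonical = json.dumps(item, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def items_to_push(items: List[dict], seen: Dict[str, str]) -> List[dict]:
    """Return only the items that are new or changed since the last check."""
    changed = []
    for item in items:
        digest = fingerprint(item)
        if seen.get(item["id"]) != digest:
            seen[item["id"]] = digest  # remember what was last sent
            changed.append(item)
    return changed


seen: Dict[str, str] = {}
links = [{"id": "1", "title": "First link"}, {"id": "2", "title": "Second link"}]
first_pass = items_to_push(links, seen)   # both items are new
second_pass = items_to_push(links, seen)  # nothing changed, nothing to send
```

With a check like this, the last 20 items can be examined on every pass without being re-sent, which is what was shuffling the order and resetting the dates.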

Domain-mapping is as nice as I hoped

I've wanted to use the Internet's domain name system as a way to bookmark sections of outlines, feeds, maybe even smaller more atomic things.

I think a domain name should be an easy, almost casual thing to allocate. Like the dialog that pops up when you Save a file for the first time.

A domain name should be like a file name. That easy. It's kind of amazing that the Internet has gotten this far without anyone trying to simplify such a central concept.

It feels like another idea, from 1999, called Edit this Page. "Writing for the web is too damned hard," it began -- and then explained how we had simplified it. That piece was seminal. It led to the software that led to blogging. It all happened very fast after that.

Now the question is how to put together all the pieces we've created since then. Feeds, outlines, status messages, social networks, photos, videos. Taking complex things, bundling them and giving them simple names. Then doing more combining, relating and bundling.

So I was working on domain-mapping for the world outline. I want to be able to give a name to a node in an outline. Then you can jump to that name in your web browser, where you see just that node and its subordinate material. Very simple. But giving it a name, that was still a matter of going to the domain registrar and wading through a complex set of dialogs.

Today, the innovation was that I was able to enter the name in a dialog and the software took care of all the michegas, quickly. Save the outline and a second later view the new domain in my browser. Bing!

To experiment we used a fun domain I had lying around, not doing very much.

I plugged my photo archive into it, and then gave April 30, 2011 a special name:

Damned if it all doesn't work! Just like that.

It's hard to show how that was simplified. Here's another address that gets you to the same place. The content is the same, but the context is way different.

We have more work to do. I want to hook this up to dnsimple, they have a nice API, and they reached out to us early-on.

We have to hook it up to RSS too. And then there are a lot of new concepts to explore. We now have a way of talking about sub-outlines without paths (and the linkrot they inevitably cause).
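Here is a rough Python sketch of the idea of naming a node instead of addressing it by path. It is not the world outline software. The class names, the example hostname and the in-memory table are hypothetical. What it shows is that a name bound to the node itself keeps working after the outline is reorganized, where a path would have rotted.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    text: str
    children: List["Node"] = field(default_factory=list)


def render(node: Node, depth: int = 0) -> List[str]:
    """Return the node and its subordinate material as indented lines."""
    lines = ["  " * depth + node.text]
    for child in node.children:
        lines.extend(render(child, depth + 1))
    return lines


class NameTable:
    """Maps a domain name to a node object, not to a path through the outline."""

    def __init__(self) -> None:
        self._by_name: Dict[str, Node] = {}

    def assign(self, name: str, node: Node) -> None:
        self._by_name[name.lower()] = node

    def resolve(self, host: str) -> Optional[Node]:
        return self._by_name.get(host.lower())


archive = Node("Photo archive")
day = Node("April 30, 2011", [Node("picture 1"), Node("picture 2")])
archive.children.append(day)

names = NameTable()
names.assign("april30.example.com", day)  # hypothetical name

# Reorganize: move the day under a new year node. The name still resolves.
archive.children.remove(day)
archive.children.append(Node("2011", [day]))
print("\n".join(render(names.resolve("april30.example.com"))))
```

A real version would also have to create the DNS record, which is the part a registrar API would handle.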

Mississippi River heading into Atchafalaya

At the suggestion of Doc Searls last year I read a book by John McPhee about the changes coming to the Mississippi River.

Seems the changes he was talking about could be happening now.

McPhee wrote a long New Yorker article that's a short version of the book. Contains the basic info you need to understand the drama.

The Times reports today that the Army Corps will open the spillway at 3:30PM Eastern with live video.

Here's a great Google Maps view of the spillway. Try looking at it in street view! Fascinating.

The spillway has been opened only once before, in 1973.

Here's the scoop. Every thousand years or so the Mississippi River changes course radically, and starts building a new piece of the delta. Physics causes this. The water is always seeking the shortest and fastest route to sea level, the Gulf of Mexico. Over time the river deposits silt that it has to flow over, causing the route to be longer and slower. Eventually in a spring flood it rises over one of its upstream banks and digs a new channel and flows in a different direction.

Well, the most recent millennium was over around 1950 or so. Since then the US Army Corps of Engineers has been at war with the river, trying to keep it flowing past Baton Rouge and New Orleans, and all the industry that's built up around the cities. And away from places where people live now, that would be underwater if the river changed course.

The Army's position is that it can't lose this war or the US economy will be severely disrupted. But a lot of people believe it's inevitable that the river will eventually win.

This year's flood may be the moment. If the river tops the levees in the place the river is trying to shift direction, it's going to be super-hard if not impossible to get it back into its current banks.

What times we live in!

Twitter-only reporters? Yes, of course

Lauren Dugan asks if we'll see Twitter-only reporters soon.

And the answer is yes, we will -- of course.

If we don't already have them something is wrong.

Last year I was looking out my window on Bleecker St in the West Village and saw a huge plume of smoke off in the distance. Within five minutes, through Twitter, I knew exactly where the fire was, and had seen pictures taken by people on the scene.

People working at a local TV station couldn't possibly have gotten a reporter and camera there that fast.

This prophecy goes all the way back, for me, to Salon boasting that they were the first web-only news organization to send a reporter to Bosnia. Big deal, I thought at the time (and wrote) -- the web is not only in Bosnia but in every other place where news is happening. The days when we had to send a correspondent to the scene to cover a story are fading fast. The Internet reaches everywhere.

I'm not saying there isn't something to be gained from going to new places and reporting on them with fresh eyes -- there is. Seeing Queensday for the first time at age 55 creates a different impression from that of people who grew up in the Netherlands. Perspective is important. Having someone who's like me observing on my behalf has value. With the caveat that it isn't often that the commercial news vendors actually do that.

Next question!

Downloading my videos from Flickr

I'm working on getting my Flickr pictures to flow to an S3 bucket via their API.

It's all been working pretty well until I realized I needed to check on the videos, and see if I needed to do something special with them.

Most of my Flickr uploads have been JPGs, but there are a few MP4s.

Near as I can tell, there is no way to actually download the videos. I could of course be missing something.

PS: It seems from this thread there was at one time a way to do it. Also on the Flickr developer blog.

Does this exist? Easy-Linux-EC2

I don't have a specific need for this right now, just wondering if it exists.

1. A machine image for Amazon EC2.

2. A flavor of Linux, with basic server stuff running (Apache, FTP).

3. Pre-installed graphic user interface shell.

Anticipating that people will say I can install whatever shell I want: I know that. I'm interested in something that competes with Windows on EC2 without Windows. And if this doesn't exist, it should.

Sadly, this guy is probably right

This appeared as a comment to my previous post about Dick Cheney and Mitch McConnell. In my heart I know he is right.

"They don't have the guts to actually do anything. They just want to make enough noise that they can hold it up as banner during the next election. Hopefully they can make enough noise to get everyone to forget that they caused this problem as much as anyone.

"The world economy is already trash, the US is already insolvent, the system now only runs on debt. Everyone knows this which is why no one calls our bluff.

"But this is only about getting re-elected so they can stuff their pockets more. No one is going to try and fix things because things are unfixable."

Mitch McConnell channels Dick Cheney

There's so much to despise about Mitch McConnell, it's hard to know where to start. It's a little overwhelming. So let's start with something small.

One little savory sleight-of-hand...

Remember when Dick Cheney went on Meet the Press and said that the NY Times was reporting (that very morning) that there were WMDs in Iraq (they were importing "aluminum tubes" supposedly used in centrifuges to create nuclear weapons). The story was leaked to the Times by Cheney. And published just in time for him to quote them on NBC.

Yesterday Mitch McConnell cited Standard & Poor's lowering its rating of US debt as a sign we're headed for trouble. Even if you took their report seriously (the market didn't), isn't it funny that the reason they cite for the lower rating is the hostage-taking of the Republicans? I mean if McConnell would just STFU and act like he's the minority leader of the Senate for crying out loud, there wouldn't be a crisis and there would be nothing for S&P to get nervous about.

What the Republicans are doing shouldn't work. They are being so open about their willingness to sacrifice the world economy so they can gut Medicare. It's sooo insane. It's enough to cause another country to declare war, don't you think? Justifiably. I mean what's our excuse? Isn't the Republican Party part of the US? If the US government trashes the world economy, isn't the US responsible?

We expect we'll muddle through, I guess. What will we be expected to muddle through next year?

Web 2.0 expiration date, Day 2

A couple of thoughts.

1. I'm revitalizing my scripts that back up Flickr. I had them working a few years ago and then for some reason let them fall into disrepair. Not good. When I upload a picture to Flickr it should automatically be backed up within the hour to a local hard drive and to my S3 archive.

2. Whenever I start this thread (I've been doing it for years) someone asks about my Disqus comments. They're right. That's a Web 2.0 service. I don't have a backup of the comments people post.

If I wanted to switch I could probably manage it so that the old posts keep their Disqus comments and the new ones get the new brand. But that doesn't help in the event that Disqus shuts down, or adds a new term to the user agreement that I can't abide by, or is bought by a company I don't trust.

However, we would be totally covered if Disqus had the option to store the text of the comments in a structured format (XML or JSON, I don't care) in an S3 archive that I give it permission to write to. That way I don't have to do anything to be backed up. Such a simple idea, and what a marketing advantage they would offer over every other way of writing for the web.

Lock-in always becomes a big issue for users, in every cycle. We're approaching that point in Web 2.0. Imho.

12.31 mile ride

When I'm riding north and feeling great and thinking maybe I'll just make a dash to the George Washington Bridge, that can only mean one thing.

Tail wind!

And it gets me every damned time.

I was sailing along, mile after mile, feeling no pain and soaring and weaving and rolling along.

Once I stopped I could feel it was more than a light breeze. More like a stiff wind.

Needless to say I got some gooood exercise on the ride south!

Feeling great at the end of the ride. Drinking cold water and thinking about what I want for dinner.

Here's the map courtesy of Cyclemeter.

Web 2.0 must have an expiration date

Quick post on another busy programming day...

Someday the pictures you store on Flickr will go the way of videos stored on Google Video.

Someday the text you wrote on Quora will have similar terms and conditions to the ones that Twitpic scared us with.

Someday the stuff you're storing for free in the cloud will not be free. Maybe it's already not free.

It pays to plan for someday.

No time like now.

Straight talk about Twitter/Facebook and flows

Twitter probably will eventually shut off the flow of tweet text coming out. This is consistent with everything I've seen. I never expected Facebook to make text coming out of Facebook easy to move to other places.

If Twitter were to make this change, it would be equally hard on HTML and HTTP, and for that matter JSON or JavaScript. Or Perl and Python. Basically it would shut off open access to their content flow. All tools and people who are experts at using those tools suffer equally.

But I don't see why we should care. The good stuff is already outside of Twitter and flows into it. As long as we keep that going, then Twitter will keep the pipe open in the incoming direction -- they have to because without it there would be very little to see.

Unless I'm missing something big, they're a lot more dependent on us for content than we are on them. And by "us" I mean bloggers and news people. Writers of Internet news and perspective. Gatherers of noteworthy stuff. Curators, photographers and people with eyes and ears.

Pay-to-speak conferences, day 2

Of the hundreds of tech conferences, only five, Mesh, PaidContent, BlogHer, 0redev and Gluecon responded to the question in the first Pay-to-speak piece, all saying that they didn't do it. That leaves us to wonder about the others.

I think most of the others do it, or have thin excuses that somehow, to themselves, justify it.

For example, Jason Pontin of Technology Review writes, via email: "Our conferences aren't 'pay-to-speak,' but sponsors are now fairly immovable on this issue: today, they won't sponsor without a speaking slot."

Yes, I'm aware of that.

He continues: "We get around it the same way TED does: we invite potential sponsors to sponsor a lunch. It has the benefit of transparency, and (we think) better serves our event's attendees: insofar as a sponsor speaker has any value to attendees, he or she probably has more value if they speak openly about their products and services, rather than presenting themselves as 'thought leaders.'"

Not sure what I'm missing, but that seems to me, in every way, to be pay-to-speak.

PS: Some conferences spell out the connection between sponsorship and speaking, in writing.

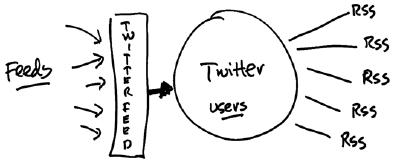

How RSS flows through Twitter and vice versa

There's some discussion today about Twitter and its RSS support.

I have some information to add to the discussion.

1. As far as I know all users have RSS feeds. The URL for my feed:

http://twitter.com/statuses/user_timeline/3839.rss

I assume that's because I was the 3839th person to sign up for an account on Twitter. If you have a Twitter account you have a feed. If you want to find it, you can use the Twitter RSS bookmarklet.

Personally, I don't subscribe to anyone's Twitter feed in my RSS aggregator, and I'm a systematic user of RSS (of course). Twitter feeds are not generally news-heavy, and that's the primary reason I use RSS. Adam Curry tried an experiment and subscribed to all the Twitter users he follows in Blork and wrote up the experience.

2. On the other side, much of the news you get in Twitter flows through TwitterFeed from RSS and Atom feeds. You can check that out, I have. If you're seeing news in Twitter, it's very likely coming from RSS.

As to why people spread disinformation about RSS in tech blogs, I have no idea. You should ask them.

Pay-to-speak at tech conferences

It's an open secret in the tech industry that if you buy a conference sponsorship your company gets a speaking slot in return. These speeches are not labeled as ads. Of course that ruins any transparency they might hope to have.

I found out about this when I ran the first BloggerCon at Harvard in 2003. I asked for sponsorships from some of the biggest names in the tech industry, and was told by each of them that they required a prime speaking spot as quid pro quo. I said maybe they could sponsor a meal, and speak at it, but I'd have to label them as paid speaking slots. I was told that was not acceptable. I told them to keep their money. We'd find a way to make it work without sponsors, and we did.

I was an adviser for another conference that I won't name. I went to several all-expense-paid meetings. Until it came up that their sponsors got speaking slots as a quid pro quo. Not disclosed to the participants. I didn't go to another meeting (and I didn't make a stink about it).

I'd love to hear from each of the tech pubs that run conferences that they don't do pay-to-speak. I suspect you won't hear most of them say it because they do it. That's how they make money from the conferences, which are really, mostly sales events for the sponsors.

Conferences that do not do pay-to-speak: Mesh, PaidContent, BlogHer, Øredev, Gluecon, IIW.

Journalist or not? Wrong question

DougSaunders: "Journalist is a word like runner, not like engineer. Any citizen who chronicles surroundings is a journalist."

If you swim you're a swimmer. If you keep a journal you're a journalist.

That's why fights over who's a journalist or not are pointless.

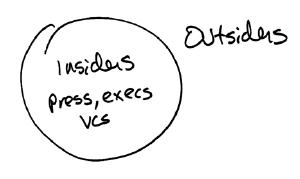

However, there is a line that is not pointless: Are you an insider or a user?

Insiders get access to execs for interviews and background info. Leaks and gossip. Vendor sports. Early versions of products. Embargoed news. Extra oomph on social networks. Favors that will be curtailed or withdrawn if you get too close to telling truths they don't want told.

All the people participating in the "journalist or not" debate are insiders. They are all compromised. Whether or not they disclose some of these conflicts, none of them disclose the ones that are central to what they will and will not say.

Then there are people who are completely outside the line. They pay for the products they use. They get new products when they ship. They never get embargoed news. They get their followers "organically."

If you want to know if a product works as advertised, people outside the circle are trustworthy. They might not be right, but at least they have no reason not to tell you what they think. People inside the circle are telling you a special version of the truth. This means they might tell you a product works when it doesn't.

Understanding Google

I've been a Google watcher for as long as there has been a Google.

My view has been almost entirely from the outside. I always have a model of what it's like on the inside, but there are obvious contradictions between my belief and reality. In the early days, I thought Google really grokked the web. Contradicting that is their almost complete obliviousness to blogging (or so it seemed). That users were turning into creators at an ever-increasing pace didn't seem to penetrate.

These days, like a lot of others, I see Google as a Microsoft that's rooted in servers instead of clients. Like Microsoft, they tend to over-reach in products, seem unable to start small and bootstrap their way to bigness in any product category. Unlike Microsoft, Google often ships their mistakes, where Microsoft killed them before they reached the market (thinking of Blackbird, Cairo, Hailstorm). Maybe that's because in Microsoft's day shipping had real physical costs. You had to fill a distribution pipe, train retailers and support people. With Google they just invite Scoble in for a demo (figuratively) and the rest is taken care of by the press and bloggers. They just have to keep the servers running (no small feat, of course).

But I still never understood the process that led to failures like Buzz and Wave. That is, until I read this post by Douwe Osinga, who recently departed Google. He seems to have kept his perspective outside as well as inside during his tenure.

Highly recommended reading, esp Google thinks Big and Google has a Way.

Finding the archive of a feed

I follow several topics on StackOverflow in my RSS river, including one on RSS itself. There's a pretty good flow, and some questions are repeats, and from those I learn where we might have done better in the past, and learn about problems we might try to solve in the future.

A very frequent question on StackOverflow is this: The feed for a site is useful for finding the last 20 or 50 items. How can I find the rest?

The answer is an unhappy one for most sites. You can't. So we've tried to address this issue, completely, in the minimal blogging tool I'm working on, also known as Blork. Hoping to provide an example for developers of other blogging systems. This is an idea that everyone should adopt, imho. Would make the web more useful.

In Blork, the feed is everything. There is only a very simple HTML rendering of your blog. Your feed is intended to be plugged into a lot of different places. If you want a traditional blog as output, we have a tool for that called rssToBlog (it works with Atom too, btw).

Not only do we write your posts to a feed, but we also maintain a calendar-structured archive of all your past posts. Which raises the question, how do you find them?

The answer: look in the feed.

There's an element called <microblog:archive> that fully describes the archive. It tells you where to look, and the start date and end date of the archive.

And the feature is described on the docs page for the microblog namespace. (Which is still a draft, comments welcome.)
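
Here's a rough sketch, in Python, of how a reader app might pull the archive description out of a feed. Only the element name `<microblog:archive>` and the fact that it carries a location, a start date and an end date come from this post. The namespace URI, the child element names (`url`, `startDay`, `endDay`), the date format and the sample feed are all placeholders I made up for illustration, so check the docs page for the real ones.

```python
import xml.etree.ElementTree as ET
from datetime import date, timedelta

# Placeholder namespace URI, NOT the real one. See the namespace docs.
NS = {"microblog": "http://example.org/microblog-namespace"}

# Hypothetical feed. The child element names are assumptions.
SAMPLE_FEED = """
<rss version="2.0" xmlns:microblog="http://example.org/microblog-namespace">
  <channel>
    <title>Example feed</title>
    <microblog:archive>
      <microblog:url>http://example.org/archive/</microblog:url>
      <microblog:startDay>2011-04-28</microblog:startDay>
      <microblog:endDay>2011-05-02</microblog:endDay>
    </microblog:archive>
  </channel>
</rss>
"""


def read_archive(feed_xml):
    """Return the archive location and date range, or None if absent."""
    root = ET.fromstring(feed_xml.strip())
    archive = root.find("channel/microblog:archive", NS)
    if archive is None:
        return None

    def text(name):
        return archive.findtext("microblog:" + name, namespaces=NS)

    return {
        "url": text("url"),
        "start": date.fromisoformat(text("startDay")),
        "end": date.fromisoformat(text("endDay")),
    }


def archive_days(info):
    """Yield every calendar day the archive claims to cover."""
    day = info["start"]
    while day <= info["end"]:
        yield day
        day += timedelta(days=1)


if __name__ == "__main__":
    info = read_archive(SAMPLE_FEED)
    print(info["url"], info["start"], info["end"])
    print(len(list(archive_days(info))), "days in the archive")
```

How each day maps to a file under the archive URL is deliberately left out, since that layout isn't described here.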

Nuremberg does not apply

On Twitter, numerous people recall that the US insisted on trials for Nazi war crimes at the end of World War II. They cite this as precedent that says that Bin Laden should have been similarly tried. But they're mixing things up. The situations are not comparable.

Suppose one of the Nazis had escaped or been acquitted. There was no danger of them re-opening Auschwitz or invading Poland again. Germany was defeated and Europe was in ruins. The next thing to do, no matter what, was to rebuild Europe. At most the Nazis could have escaped justice, and therefore would fail to serve as an example for future would-be war criminals. The Nazis were done, and Germany was knocked out.

Pretty sure I don't have to finish this, but just in case. Trying Bin Laden would have created an unprecedented spectacle, and would have given him control of everything until there was a resolution. Bin Laden was a master of media. He would have known what to do with such an opportunity. And since we know his intentions were to kill Americans, that can't help but mean more dead Americans. Maybe a lot more dead Americans.

Now that he's dead, we hope he remains silent. But that's not likely. He almost certainly left some kind of message to be played on his death. Maybe many. But at least now it's finite.

We did the right thing by killing him. Sometimes being perfect costs too much. This is one of those times when settling for imperfection was the correct choice.

Feature requests from an iPad-using Blorker

A simple feature request for people who run news sites.

Make sure that the title of each page contains the title of the story that's on the page.

An example of a site that doesn't do this -- the mobile version of Gawker stories. When I'm reading one of their stories on my iPad and want to push it to my followers, the entry box is pre-populated with the title. Which is always the same. "Gossip from Manhattan and the Beltway to Hollywood and the Valley." No matter what the story is. Not good.

Here's a screen shot.
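
For people who run news sites and want to test for this, here's a minimal sketch in Python. It pulls the `<title>` out of a page and checks whether the story's headline appears in it. The function names and the sample headline are hypothetical, and a real check would fetch the page and get the headline from wherever the site stores it.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collect the text inside the page's <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def page_title(html):
    """Return the page title with runs of whitespace collapsed."""
    parser = TitleParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.title.split())


def title_names_story(html, headline):
    """True if the page title contains the story's headline."""
    return headline.casefold() in page_title(html).casefold()


if __name__ == "__main__":
    # Same tagline on every story page: the problem described above.
    bad = ("<html><head><title>Gossip from Manhattan and the Beltway "
           "to Hollywood and the Valley.</title></head></html>")
    good = "<html><head><title>Some Story - Example Site</title></head></html>"
    print(title_names_story(bad, "Some Story"))
    print(title_names_story(good, "Some Story"))
```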

Another peeve is sites that give me a special version of a page for my iPad. That's not necessary or even welcome. The iPad has a full-size web browser. I want your normal version. Another feature request for Gawker. Don't treat the iPad as if it were an iPhone.
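
Here's a sketch of what that request could look like on the server, in Python, for sites that pick a layout from the User-Agent header. It assumes the header contains the token "iPad" on an iPad and "iPhone" or "iPod" on the phone-sized devices. The function name, the layout names and the sample strings are illustrative only.

```python
def choose_layout(user_agent):
    """Pick "full" or "mobile" from a User-Agent string.

    The iPad is checked first so it is never lumped in with phones,
    even if its User-Agent also contains a generic "Mobile" token.
    """
    ua = (user_agent or "").lower()
    if "ipad" in ua:
        return "full"      # full-size browser, serve the normal page
    if "iphone" in ua or "ipod" in ua:
        return "mobile"    # small screen, mobile page is reasonable
    return "full"          # default: the normal version


if __name__ == "__main__":
    # Illustrative, shortened User-Agent strings.
    print(choose_layout("Mozilla/5.0 (iPad; CPU OS 4_3 like Mac OS X) Mobile Safari"))
    print(choose_layout("Mozilla/5.0 (iPhone; CPU iPhone OS 4_3 like Mac OS X) Mobile Safari"))
```

Defaulting to the normal version means an unrecognized device gets the full page, not a cut-down one.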

Especially irritating are wordpress.com sites, which put up a Flipboard-like interface. I know I can turn it off, but I don't want to be bothered knowing how to do that. I just want to read the news, not be impressed by your programming prowess, or compliance with the latest Silicon Valley fad. I went to the blog to read what they had to say. I find it especially irritating when they do it to one of my own sites. That I pay them to host. Without my asking for it.

One more thing, Apple's insisting that Flash die clearly isn't working. As an iPad user, I miss a fair amount of stuff I care about. Yeah, it's too much trouble to get up and go to my desktop computer just to see what the video says. At some point Apple is going to throw in the towel on this one. Sooner the better.

Did the Pakistanis know Bin Laden was there?

The question raised in the title of this post is fairly naive. He was in the middle of a Pakistani military city. They knew, and they don't mind if you figure it out. It won't be acknowledged in the media. Since they're mostly corporate-owned, no one will ask the question in any meaningful way.

The more interesting question is how long did the US govt know he was there, and how did they pick the moment to kill him?

Before you rush in and say "It was political" -- as the Republicans are -- of course it was political. Everything about Bin Laden, on all sides, is and was political.

And if you accept the US story on its face, we could have gone in there at any time in the last few months. Why now?

Well, if you were the President, would you have killed Bin Laden in the middle of the revolutions in the Middle East? Wouldn't have been very good timing. Might have hit the reset button on all that. Much better to let it run its course. Bin Laden wasn't going anywhere. Not with the Pakistanis having him so well controlled.

When did the President need the rhetorical high ground to win the war with the Republicans? Who did he fear more, Bin Laden, who was cornered, and controlled by a US ally, Pakistan, or Mitch McConnell, Eric Cantor and the Koch brothers? Again, we don't have a choice but to take things at face-value. Obviously the Republicans were doing a lot more damage to the US than Bin Laden was. A good President picks his battles and fights them on his own terms. Could the President use Bin Laden to win the war with the Republicans? Well, duh. (Hello.)

And it's working. The Republicans are retreating on all fronts. (But that's probably all theater too, the real war is much more complicated than the battle between two political parties that report to the same multi-national corporate bosses.)

Bin Laden knew it was over for a long long time. According to reports he didn't leave the compound for five years. He probably couldn't get out if he wanted to. He didn't have Internet or phone service. His only connection to the rest of the world was through a courier, and we knew all about that. Did Bin Laden know we knew? I think so. And there was nothing he could do about it. The man was cornered. And he was that way for a long time.
